Table of contents

- Quick answer: how to prioritize MVP features

- Why most founders struggle with prioritization

- Framework 1: MoSCoW - the simplest starting point

- Framework 2: RICE - when you need numbers

- Framework 3: Kano - what delights vs. what's expected

- User story mapping: seeing the whole picture

- Core vs. nice-to-have: the three questions test

- Saying no to feature creep (the hardest skill)

- Aligning features with business goals

- Practical prioritization workshop template

- Which framework should you use?

- How to find the right development partner for your prioritized MVP

- Sources

- Related reading

Your feature list is too long. I know this without seeing it, because every founder’s feature list is too long.

A founder came to us last year with 47 items. They’d spent three months collecting requests from potential users, advisors, and their own team. Every feature felt important. Every feature had a story behind it. I’ve been in this exact meeting more times than I can count.

We asked one question: “Which three of these would make someone pay you money on day one?”

Silence. Then: “I mean… all of them kind of contribute to that.”

No. That’s how you burn $120,000 building something nobody actually needs yet. The founders who ship successfully aren’t the ones with the longest feature list - they’re the ones who are ruthless about what to cut.

This guide is about scope decisions inside the MVP process: how to cut, rank, and sequence features once you already have an idea worth testing. For the full end-to-end build path, pair it with How to Build an MVP in 2026: Practical Founder Guide.

Quick answer: how to prioritize MVP features

Use this process:

- List every feature your team, users, or advisors have suggested.

- Define your riskiest assumption - the one thing that must be true for the product to work.

- Apply a scoring framework (MoSCoW for simplicity, RICE for data-driven teams, Kano for user-experience focus).

- Draw a hard line - only features that directly test your riskiest assumption or enable the core workflow make the cut.

- Create a “not now” list - everything else goes here, not in the trash, just in a parking lot for post-launch.

Most founders who follow this process cut 60-80% of their initial feature list - and ship 2-3x faster as a result.

Key takeaway: The goal of MVP prioritization is not to rank features - it’s to eliminate features. Every feature you cut is weeks saved and dollars preserved.

For the full MVP development process, see How to Build an MVP: Complete Guide for Founders and SMB Owners.

Why most founders struggle with prioritization

Before the frameworks, let’s name the actual problem: most feature lists are emotional, not strategic.

Features get added because:

- A potential user mentioned it once in an interview

- An advisor said “you’ll need this for investors”

- A competitor has it

- The founder personally wants it

- It seems easy to build

None of these are good prioritization criteria. A feature that a potential user mentioned once is an anecdote, not validation. A feature a competitor has might be their biggest regret. A feature that seems easy still has design, testing, and maintenance costs.

The real question for every feature: “If we don’t build this, can someone still complete the core workflow and get value?”

If yes, it’s not an MVP feature.

Key takeaway: If a feature isn’t required for a user to complete the core workflow and get value, it doesn’t belong in your MVP - regardless of who requested it.

Framework 1: MoSCoW - the simplest starting point

MoSCoW is the fastest framework to run. It works well when you need to make decisions quickly with a small team.

How it works

Put every feature into one of four buckets:

| Category | Definition | MVP rule |

|---|---|---|

| Must have | The product literally doesn’t work without this | Build it |

| Should have | Important but the product still functions without it | Build only if time/budget allows |

| Could have | Nice to have, improves experience | Post-launch |

| Won’t have (this time) | Explicitly out of scope for this version | Parking lot |

Running a MoSCoW session

- Print or display every feature on a card (physical or digital).

- For each feature, ask: “If we launch without this, does the core workflow break?”

- If yes, it’s a Must have.

- If no, ask: “Will users be frustrated without this, or just mildly inconvenienced?”

- Frustrated = Should have. Mildly inconvenienced = Could have.

- Anything that’s aspirational, speculative, or competitive-parity = Won’t have.

Common mistake: putting too many features in “Must have.” If more than 30% of your features are Must haves, you’re not being honest. A true MVP typically has 5-8 Must have features, not 20.
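The session questions above amount to a small decision tree. Here is a minimal Python sketch of it, assuming each feature has already been tagged with three yes/no answers. The field names (`breaks_core_workflow`, `frustrates_users`, `speculative`) are hypothetical and not part of MoSCoW itself, and checking the speculative flag before the frustration question is my own ordering choice. The sketch also reports the share of Must haves so you can compare it against the 30% ceiling.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    breaks_core_workflow: bool  # "If we launch without this, does the core workflow break?"
    frustrates_users: bool      # True = frustrated, False = mildly inconvenienced
    speculative: bool = False   # aspirational, speculative, or competitive parity


def moscow_bucket(feature: Feature) -> str:
    """Walk the session questions and return a MoSCoW bucket."""
    if feature.breaks_core_workflow:
        return "Must have"
    if feature.speculative:
        return "Won't have"
    if feature.frustrates_users:
        return "Should have"
    return "Could have"


def must_have_share(features: list[Feature]) -> float:
    """Fraction of all features that landed in the Must have bucket."""
    buckets = Counter(moscow_bucket(f) for f in features)
    return buckets["Must have"] / len(features)


# Illustrative answers only; your own session decides the real values.
features = [
    Feature("Create a project", breaks_core_workflow=True, frustrates_users=True),
    Feature("Email notifications", breaks_core_workflow=False, frustrates_users=True),
    Feature("Gantt chart view", breaks_core_workflow=False, frustrates_users=False),
    Feature("Custom workflows", False, False, speculative=True),
]

for f in features:
    print(f"{f.name}: {moscow_bucket(f)}")

share = must_have_share(features)
if share > 0.30:
    print(f"Warning: {share:.0%} of features are Must haves, re-check the list")
```

The code adds nothing to the method. Its only value is that writing the answers down as explicit yes/no fields makes it harder to quietly promote a feature to Must have.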

MoSCoW example: project management SaaS MVP

| Feature | Category | Reasoning |

|---|---|---|

| Create a project | Must have | Core object |

| Add tasks to a project | Must have | Core workflow |

| Assign tasks to team members | Must have | Core collaboration |

| Due dates on tasks | Must have | Core scheduling |

| Email notifications | Should have | Useful but users can check manually |

| File attachments | Should have | Common need but not core |

| Gantt chart view | Could have | Visual nice-to-have |

| Time tracking | Could have | Adjacent feature, not core |

| Resource management | Won’t have | Enterprise feature |

| Custom workflows | Won’t have | Requires mature product understanding |

Key takeaway: MoSCoW is best for fast decisions. The discipline is keeping Must haves under 30% of total features - if everything is must-have, nothing is.

Framework 2: RICE - when you need numbers

RICE gives you a numeric score for each feature, which helps when you have stakeholders who need data to make decisions (or when your gut is pulling you in too many directions).


How it works

Score each feature on four dimensions:

| Factor | What it measures | How to score |

|---|---|---|

| Reach | How many users will this affect in a given period? | Number of users (e.g., 100 users/quarter) |

| Impact | How much will this move the needle for those users? | 3 = massive, 2 = high, 1 = medium, 0.5 = low, 0.25 = minimal |

| Confidence | How sure are you about reach and impact estimates? | 100% = high, 80% = medium, 50% = low (in-between values are fine) |

| Effort | How many person-weeks will this take? | Person-weeks (e.g., 2 weeks) |

Formula: RICE Score = (Reach x Impact x Confidence) / Effort

RICE example: B2B onboarding MVP

| Feature | Reach | Impact | Confidence | Effort | RICE Score |

|---|---|---|---|---|---|

| Self-serve signup flow | 200 | 3 | 90% | 3 weeks | 180 |

| Dashboard with key metrics | 200 | 2 | 80% | 4 weeks | 80 |

| CSV data import | 150 | 2 | 70% | 2 weeks | 105 |

| Team invitations | 80 | 1 | 60% | 2 weeks | 24 |

| Custom branding | 50 | 0.5 | 50% | 3 weeks | 4.2 |

| API access | 30 | 2 | 40% | 6 weeks | 4 |

The scores make it obvious: self-serve signup and CSV import are high-leverage. Custom branding and API access can wait.
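If you would rather not do the arithmetic by hand, the formula fits in a few lines of Python. This is a minimal sketch that reuses the numbers from the table above; the `Feature` class and its field names are my own illustrative choices, and confidence is written as a fraction (0.90 rather than 90%).

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: int           # users affected in the chosen period
    impact: float        # 3, 2, 1, 0.5, or 0.25
    confidence: float    # fraction between 0 and 1
    effort_weeks: float  # person-weeks

    @property
    def rice(self) -> float:
        # RICE Score = (Reach x Impact x Confidence) / Effort
        return self.reach * self.impact * self.confidence / self.effort_weeks


features = [
    Feature("Self-serve signup flow", 200, 3, 0.90, 3),
    Feature("Dashboard with key metrics", 200, 2, 0.80, 4),
    Feature("CSV data import", 150, 2, 0.70, 2),
    Feature("Team invitations", 80, 1, 0.60, 2),
    Feature("Custom branding", 50, 0.5, 0.50, 3),
    Feature("API access", 30, 2, 0.40, 6),
]

# Highest score first.
ranked = sorted(features, key=lambda f: f.rice, reverse=True)
for f in ranked:
    print(f"{f.name:<28} {f.rice:6.1f}")
```

Keeping the inputs next to the score makes it easy to change one estimate, such as a lower confidence, and see whether the ranking actually moves.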

When RICE works (and when it doesn’t)

Works well when: you have some user data, multiple stakeholders with competing priorities, or you need to justify decisions to investors.

Doesn’t work well when: you’re pre-launch with no user data (your Reach and Confidence estimates will be guesses), or when you have fewer than 10 features to evaluate.

Key takeaway: RICE forces you to quantify assumptions. Even if your numbers are rough estimates, the exercise of scoring Reach, Impact, Confidence, and Effort exposes which features are high-leverage and which are wishful thinking.

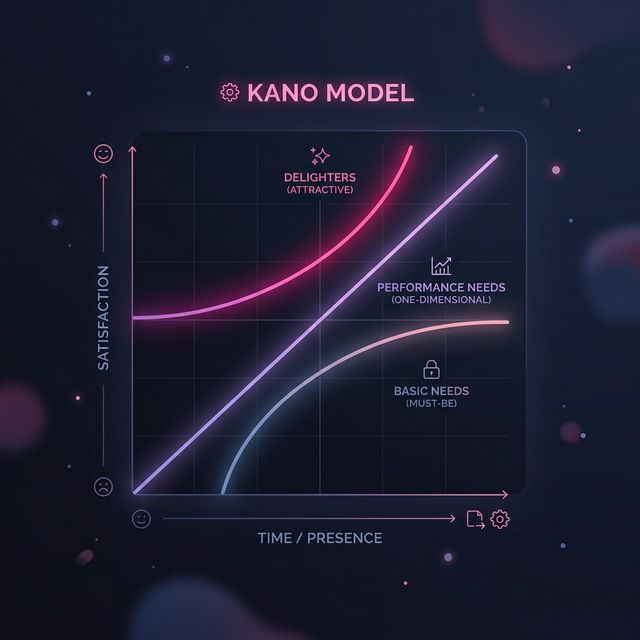

Framework 3: Kano - what delights vs. what’s expected

The Kano model is useful when you’re building something where user experience is a key differentiator - not just functionality but how it feels.

The five Kano categories

| Category | User reaction if present | User reaction if absent |

|---|---|---|

| Must-be (basic) | No reaction (expected) | Frustrated, angry |

| One-dimensional (performance) | Satisfied | Dissatisfied |

| Attractive (delight) | Delighted, surprised | No reaction |

| Indifferent | No reaction | No reaction |

| Reverse | Dissatisfied | Satisfied |

Kano for MVP prioritization

For an MVP:

- Must-be features: Build all of them. These are table stakes.

- One-dimensional features: Build the top 2-3 that align with your core value proposition.

- Attractive features: Pick ONE that creates a “wow” moment - this is your differentiation.

- Indifferent features: Cut all of them.

- Reverse features: Cut all of them (these actively hurt your product).

The power of Kano is that it helps you find that one “delighter” that makes people talk about your product - without over-building the basics.

How to run a quick Kano analysis

For each feature, ask potential users two questions:

- “How would you feel if this feature were included?” (functional question)

- “How would you feel if this feature were NOT included?” (dysfunctional question)

Answer options for both questions: I’d like it / I expect it / I’m neutral / I can tolerate it / I’d dislike it.

You can run this as a quick survey with 10-15 potential users and classify features based on the combination of answers.
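One common way to classify features from the answer pairs is the Kano evaluation table, which maps each functional/dysfunctional pair to a category. The sketch below uses the widely circulated version of that table, which this guide does not itself specify. It also adds a sixth result, "Questionable", for contradictory pairs such as liking a feature both present and absent. The short answer keys and the sample responses are hypothetical.

```python
from collections import Counter

# Answer options, in the order used for the table columns below.
ANSWERS = ["like", "expect", "neutral", "tolerate", "dislike"]

# Rows: answer to the functional question.
# Columns: answer to the dysfunctional question (same order as ANSWERS).
# A = Attractive, O = One-dimensional, M = Must-be,
# I = Indifferent, R = Reverse, Q = Questionable (contradictory answers).
EVALUATION_TABLE = {
    "like":     ["Q", "A", "A", "A", "O"],
    "expect":   ["R", "I", "I", "I", "M"],
    "neutral":  ["R", "I", "I", "I", "M"],
    "tolerate": ["R", "I", "I", "I", "M"],
    "dislike":  ["R", "R", "R", "R", "Q"],
}


def classify_response(functional: str, dysfunctional: str) -> str:
    """Map one respondent's answer pair to a Kano category letter."""
    return EVALUATION_TABLE[functional][ANSWERS.index(dysfunctional)]


def classify_feature(responses: list[tuple[str, str]]) -> str:
    """Pick the most frequent category across respondents.

    On a tie, the category encountered first wins.
    """
    counts = Counter(classify_response(f, d) for f, d in responses)
    return counts.most_common(1)[0][0]


# Hypothetical survey answers for one feature: (functional, dysfunctional).
responses = [
    ("like", "dislike"),
    ("like", "neutral"),
    ("like", "tolerate"),
    ("expect", "dislike"),
    ("like", "neutral"),
]
print(classify_feature(responses))
```

With only 10-15 respondents the majority vote is a rough signal. A feature that splits evenly between two categories is worth a follow-up conversation rather than a coin flip.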

Key takeaway: Kano helps you find the one “delighter” feature that creates word-of-mouth - plus all the table-stakes features users silently expect. For an MVP, build all must-be features, the top performance features, and exactly one attractive feature.

User story mapping: seeing the whole picture

Frameworks score individual features, but they don’t show you how features connect. User story mapping fixes that.

How to build a story map (1-hour workshop)

- Write the user’s journey across the top (left to right): Discover > Sign Up > First Action > Core Workflow > Get Result > Come Back.

- Under each step, list every feature that supports that step - one sticky note per feature.

- Arrange vertically by priority - essentials at the top, nice-to-haves lower.

- Draw a horizontal line - everything above the line is your MVP. Everything below is post-launch.

The line almost always cuts lower than you expect. Most founders find that the Core Workflow and Get Result columns need the fewest features to function - the bloat is usually in Sign Up (too many fields), First Action (too much onboarding), and Come Back (too many notifications/engagement tricks).
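If your story map lives in a spreadsheet or a script rather than on a wall, you can model it as journey steps that each hold a priority-ordered list of features, with the MVP line recorded as a depth per column. This is a minimal sketch under that assumption. The feature names and the `line_depth` values are hypothetical, and the final check simply flags any journey step left with nothing above the line.

```python
# Journey steps in order; features under each step run top-to-bottom by priority.
story_map = {
    "Discover": ["Landing page", "Blog"],
    "Sign Up": ["Email + password", "Google login", "Company profile fields"],
    "First Action": ["Create first project", "Guided product tour"],
    "Core Workflow": ["Add tasks", "Assign tasks"],
    "Get Result": ["Task completion view"],
    "Come Back": ["Weekly summary email", "Push notifications"],
}

# How many sticky notes sit above the MVP line in each column.
line_depth = {
    "Discover": 1, "Sign Up": 1, "First Action": 1,
    "Core Workflow": 2, "Get Result": 1, "Come Back": 1,
}


def split_at_line(story_map, line_depth):
    """Return (mvp, post_launch) dicts keyed by journey step."""
    mvp, post_launch = {}, {}
    for step, features in story_map.items():
        depth = line_depth.get(step, 0)  # steps with no depth ship nothing
        mvp[step] = features[:depth]
        post_launch[step] = features[depth:]
    return mvp, post_launch


mvp, post_launch = split_at_line(story_map, line_depth)

# A step with nothing above the line means users cannot walk the full journey.
gaps = [step for step, features in mvp.items() if not features]

for step, features in mvp.items():
    print(f"{step}: {', '.join(features) or '(nothing ships)'}")
print("Steps with no MVP feature:", gaps)
```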

Story mapping + framework = the combo that works

Use story mapping first, then score the features above the line with RICE or MoSCoW. This catches features that score well individually but don’t fit the core user journey.

Core vs. nice-to-have: the three questions test

The prioritization shortcut I use every time: Ask “Can a user complete the core workflow without this feature?” If yes, it’s not in v1. This single question has saved more founder money than any framework I’ve ever used.

When you’re stuck on a specific feature, ask these three questions:

- Can the user complete the core workflow without this? If yes, it’s nice-to-have.

- Will this feature take more than 1 week to build? If yes, it needs strong justification.

- Do we have evidence that users want this? (Not “will users like this” - “have users explicitly asked for this or demonstrated the need?”)

If a feature fails any two of these, it’s a post-launch feature. No debate needed.
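The "fails any two" rule is easy to apply mechanically once you decide what counts as a fail. The sketch below assumes a feature fails a question when (1) the core workflow works without it, (2) it takes more than one week to build, or (3) there is no evidence users want it. Treating question 2 as a hard fail rather than a call for stronger justification is my simplification, and the function and parameter names are hypothetical.

```python
def failed_questions(works_without_it: bool, build_weeks: float, has_evidence: bool) -> int:
    """Count how many of the three questions a feature fails."""
    failures = 0
    if works_without_it:   # Q1: users can complete the core workflow without it
        failures += 1
    if build_weeks > 1:    # Q2: takes more than 1 week to build
        failures += 1
    if not has_evidence:   # Q3: nobody has asked for it or demonstrated the need
        failures += 1
    return failures


def verdict(works_without_it: bool, build_weeks: float, has_evidence: bool) -> str:
    """Apply the rule: failing any two questions means post-launch."""
    if failed_questions(works_without_it, build_weeks, has_evidence) >= 2:
        return "post-launch"
    return "MVP candidate"


# Illustrative inputs only.
print(verdict(works_without_it=True, build_weeks=3, has_evidence=True))
print(verdict(works_without_it=False, build_weeks=2, has_evidence=True))
```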

Saying no to feature creep (the hardest skill)

Something I learned from watching founders: The ones who ship fastest aren’t the ones with the best ideas. They’re the ones who are most comfortable saying “not yet” to good ideas. Feature discipline is a muscle, not a talent.

Feature creep doesn’t announce itself. It sneaks in through:

- “This will only take a day” (it never takes a day)

- “Users will expect this” (based on what evidence?)

- “Competitors have this” (competitors also have years of development and data)

- “Can we just add one more thing?” (repeated 15 times)

How to say no without killing morale

Create a “Version 2 Board” - a visible, shared document where every rejected feature goes with a note about when it might make sense. This reframes “no” as “not now” and gives people a place to put their ideas without derailing the current sprint.

Rules for the V2 Board:

- Every feature that doesn’t make the MVP cut goes here.

- Features are reviewed after launch, when you have real user data.

- Anyone can add to the V2 Board at any time (keeps people feeling heard).

- Nothing comes off the V2 Board and into the current sprint without a formal review.

Key takeaway: Feature creep is the number one MVP killer. Create a “Version 2 Board” so every idea has a home - but none of them derail your current build.

For more on how much these scope changes can cost, see MVP Development Cost in 2026: Founder Pricing Guide.

Aligning features with business goals

Prioritization frameworks are tools - but they need to serve a strategy. Before scoring any feature, make sure you’ve answered:

- What is the one metric this MVP needs to prove? (e.g., “Users complete the core workflow at least 3 times in their first week”)

- Who is the specific user we’re building for? (Not “small businesses” - “marketing managers at 10-50 person agencies who currently use spreadsheets”)

- What is the one action we want users to take after trying the MVP? (e.g., “Upgrade to paid,” “Invite a team member,” “Share with a colleague”)

Every feature should trace back to one of these three answers. If it doesn’t connect, it doesn’t ship.

| Business goal | Candidate feature | Ship in MVP? |

|---|---|---|

| Prove users complete core workflow 3x/week | Task creation + completion tracking | Yes |

| Prove users complete core workflow 3x/week | Custom themes and branding | No |

| Drive upgrades to paid | Usage limit on free tier | Yes |

| Drive upgrades to paid | Advanced analytics dashboard | No (build a simple counter first) |

| Encourage team invitations | One-click invite link | Yes |

| Encourage team invitations | Role-based permissions | No (everyone gets the same role for now) |

Practical prioritization workshop template

Here’s a step-by-step workshop you can run with your team in 2-3 hours.

Before the workshop

- Collect all feature requests into one list (no duplicates).

- Define your riskiest assumption and core metric.

- Invite the decision-maker, one developer, and one person representing the user perspective.

Workshop agenda (2.5 hours)

| Time | Activity | Output |

|---|---|---|

| 0:00 - 0:15 | Align on riskiest assumption and core metric | One sentence everyone agrees on |

| 0:15 - 0:45 | Story mapping: map the user journey | Visual map with features under each step |

| 0:45 - 1:15 | MoSCoW sort: categorize each feature | Features in 4 buckets |

| 1:15 - 1:30 | Break | - |

| 1:30 - 2:00 | RICE scoring on Must-have and Should-have features only | Ranked list with scores |

| 2:00 - 2:15 | Draw the MVP line: what ships, what waits | Final MVP scope |

| 2:15 - 2:30 | Effort estimation sanity check with developer | Confirm scope fits timeline and budget |

After the workshop

- Document the final MVP feature list.

- Create the V2 Board with everything that didn’t make the cut.

- Share both documents with everyone involved so there’s no revisionism later.

Key takeaway: A 2.5-hour prioritization workshop with the right people in the room can save you months of building the wrong features. Document everything - including what you said no to and why.

Which framework should you use?

| Situation | Best framework | Why |

|---|---|---|

| Small team, fast decision needed | MoSCoW | Simple, no math required |

| Multiple stakeholders with competing views | RICE | Numbers reduce opinion-based arguments |

| UX-driven product where experience matters | Kano | Identifies delighters vs. table stakes |

| Complex product with many user steps | Story mapping + MoSCoW | Shows how features connect in the journey |

| Investor pitch or board presentation | RICE + story map visual | Data-backed and visually clear |

Most founders we work with at Codivox use a combination: story mapping to see the big picture, MoSCoW for the first rough cut, and RICE to resolve any remaining debates.

How to find the right development partner for your prioritized MVP

Once you’ve defined your feature scope, you need a development partner who respects it - not one who tries to expand scope to increase their billing.

Red flags when evaluating agencies:

- They add features you didn’t ask for in their proposal.

- They can’t explain why a feature should be included.

- They quote a fixed price without understanding your prioritization decisions.

- They don’t ask about your success metric or riskiest assumption.

Green flags:

- They challenge your feature list and suggest cuts.

- They ask about your business goals before discussing technology.

- They propose phased delivery with a clear MVP-first milestone.

- They have a process for handling feature requests that come up mid-build.

For more on choosing the right partner, see How to Hire an MVP Development Agency.

FAQ

What’s the best feature prioritization framework for a first-time founder?

My advice: start with MoSCoW. It’s the simplest and forces the most important decision: “Does the product work without this feature?” You can layer on RICE or Kano later when you have more data and stakeholders.

How many features should an MVP have?

Most successful MVPs have 5-10 core features, not 25-40. The exact number depends on your product type, but if your MVP feature list is longer than one page, you’re probably building too much. I’d focus on the single core workflow.

How do I handle feature requests from investors or advisors?

Listen, document, and add them to your V2 Board - but don’t change your MVP scope unless the request reveals a flaw in your riskiest assumption. Investors and advisors bring valuable perspective, but they’re not your users.

Should I build features that don’t scale?

Yes, for an MVP. Manual processes, concierge workflows, and “things that don’t scale” are some of the best MVP strategies. They let you validate demand before investing in automation. Build the automated version in V2 once you’ve proven people want the outcome.

How often should I re-prioritize features during development?

Set a cadence: once every two weeks during active development. Review your V2 Board, check if any new information (user feedback, technical discoveries) changes priorities, and make adjustments. Avoid re-prioritizing daily - that’s just indecision disguised as agility.

What if my co-founder disagrees on feature priorities?

This is a strategy disagreement, not a feature disagreement. Go back to your riskiest assumption and core metric. If you disagree on those, resolve that first. Once you’re aligned on what the MVP needs to prove, feature priorities usually sort themselves out.

Sources

- Martin Fowler: Writing the MVP — Practitioner framework for scoping an MVP around a single riskiest assumption, foundational to the prioritization logic in this guide.

- CB Insights: The top reasons startups fail — Data on “no market need” and premature scaling that justifies ruthless feature cuts.

- Standish Group CHAOS research — Longitudinal evidence that 60–80% of delivered features are rarely or never used — the core case for aggressive “not now” decisions.

- Y Combinator library — YC’s collection of founder-level playbooks on scope discipline and staying focused on the core workflow.

- Lean UX and lean inception reference materials — Extended reference for the lean-inception approach this guide’s MoSCoW/RICE/Kano walkthrough sits on top of.

Related reading

- How to Build an MVP: Complete Guide for Founders and SMB Owners

- MVP Development Cost in 2026: Founder Pricing Guide

- How to Hire an MVP Development Agency

Feature prioritization isn’t a one-time exercise - it’s a discipline. The frameworks in this guide give you structure, but the real skill is the willingness to say “not now” to good ideas so you can ship the right ones first.

Ready to scope your MVP? See how we build →