Table of contents

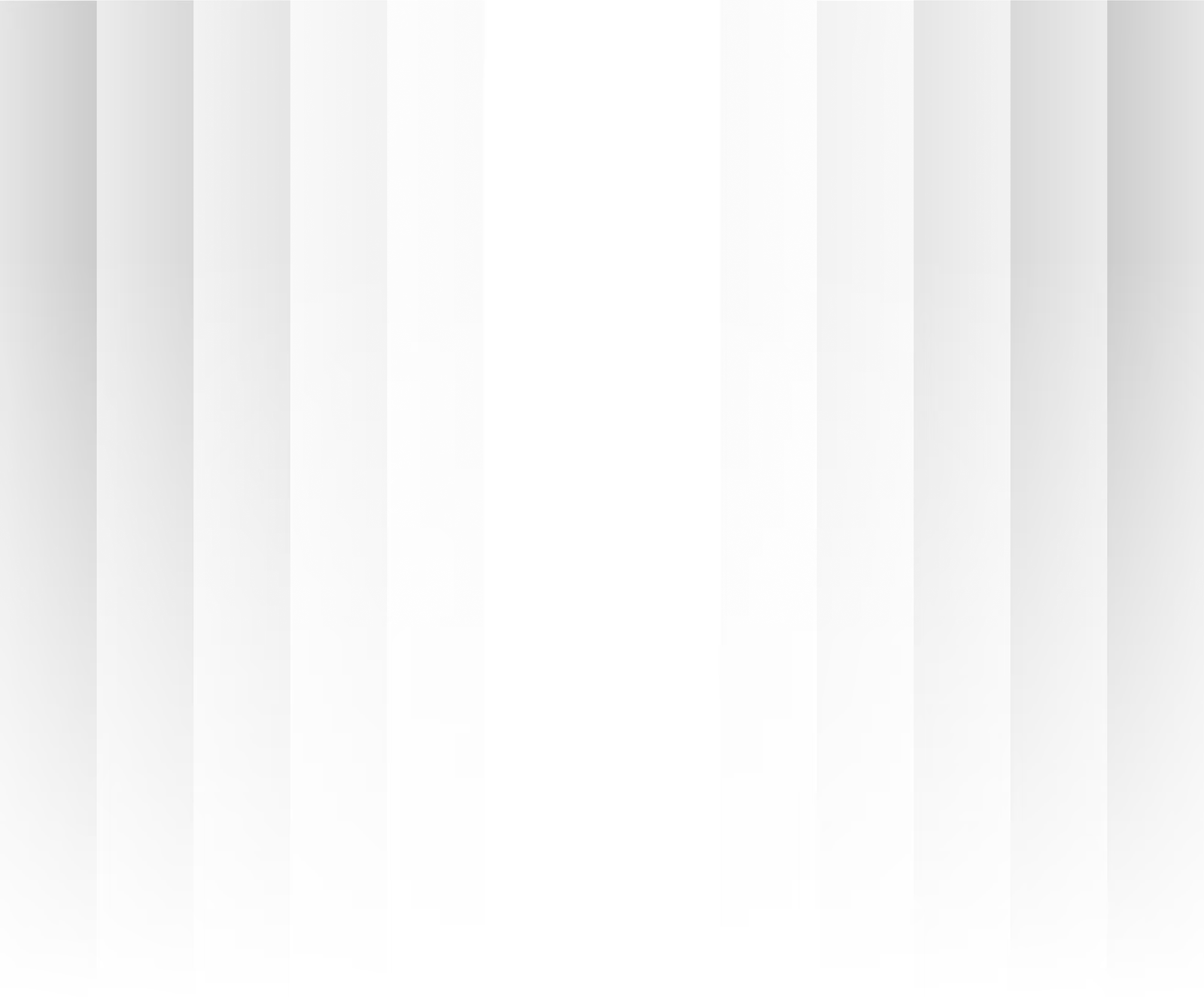

- Quick answer

- Problem validation vs solution validation

- Customer discovery interviews

- Landing page tests

- Waitlist strategies that actually work

- Fake door testing

- Competitor analysis framework

- Market sizing for SaaS

- Validating willingness to pay

- AI-accelerated validation: compressing months into days

- When to stop validating and start building

$95,000. Seven months. Three paying customers.

That’s not a horror story - it’s the median outcome for SaaS products that skip validation. The founder built a tool that automatically organized customer feedback into actionable themes. The product worked exactly as designed. Clean UI, reliable classification engine, Slack integration, weekly summary emails. Technically excellent.

They launched to their waitlist of 400 people. 65 signed up for the free trial. 11 completed onboarding. 3 converted to paid. $792 in MRR against a $95K investment.

The postmortem was painful but instructive. The founder had validated the problem (customer feedback is disorganized) but never validated the solution (an automated tool to organize it). When they finally did customer interviews, they discovered the real issue: most teams didn’t want automated categorization - they wanted a shared inbox where the team could manually organize feedback together. The collaboration was the value, not the automation.

They built the wrong solution to a real problem. Validation would have revealed it in 3 weeks and $500 instead of 7 months and $95,000.

This guide is about pre-build evidence: customer interviews, landing page tests, willingness-to-pay signals, and validation thresholds. It should reduce what you build, not tell you how to architect or scale the product after you start building. For tracking success post-launch, you can check out our guide to SaaS metrics & KPIs.

Quick answer

SaaS validation has two stages: problem validation (confirming people have the pain you think they have) and solution validation (confirming your proposed solution addresses the pain in a way people will pay for). In my experience, most founders skip the second stage. A complete validation process takes 4-8 weeks, costs $500-$3,000, and includes customer discovery interviews, a landing page test, and a willingness-to-pay experiment. Only build when you have evidence from all three - not when you feel confident.

For the build phase after validation, read How to Build a SaaS Product as an SMB and How to build an MVP.

Problem validation vs solution validation

This is the most important distinction in SaaS validation, and it is where most founders go wrong.

Problem validation answers: Do people have this problem? Is it painful enough that they would pay to solve it? How do they currently handle it?

Solution validation answers: Does my proposed solution solve the problem in a way that is better than alternatives? Will people switch from their current approach to mine? At what price?

| Stage | What you are testing | Evidence required | Common mistake |
|---|---|---|---|
| Problem validation | Problem exists and is painful | 15+ interviews confirming pain, existing workarounds, frequency of occurrence | Asking “would you use this?” instead of “how do you handle this today?” |
| Solution validation | Your approach solves the problem | Landing page signups, willingness-to-pay signals, prototype feedback | Skipping this stage entirely and going straight to building |

You must complete both stages before building. I can’t emphasize this enough. Validating the problem alone is not enough - the feedback tool founder proved that. The problem was real. The solution was wrong.

Key takeaway: Problem validation and solution validation are two separate stages. Most failed SaaS products solved real problems with the wrong solutions. Validate both before writing a line of code.

Customer discovery interviews

Customer discovery interviews are the foundation of validation. They are not sales calls. They are not feature-request sessions. They are structured conversations designed to understand behavior, not opinions.

The validation test I run on every idea in 48 hours: I find 5 people who match the target customer and ask one question: “How are you solving this problem today?” If they describe an active, painful workaround, the problem is real. If they shrug and say “it’s not a big deal,” the idea needs rethinking - no matter how elegant the solution.

Who to interview

Interview people in your target ICP (Ideal Customer Profile) who actively deal with the problem you are trying to solve. Not friends. Not family. Not other founders (unless founders are your ICP). Not people who “might” have the problem someday.

Target: 15-25 interviews. Below 15, your sample is too small to identify patterns. Above 25, you are likely seeing diminishing returns.

Finding interview subjects

| Channel | Expected response rate | Best for | Cost |
|---|---|---|---|
| LinkedIn cold outreach | 5-10% | B2B professionals with specific job titles | Free (time only) |
| Online communities (Reddit, Slack groups) | 3-8% | Niche audiences, specific problem domains | Free |
| Personal network (second-degree connections) | 20-40% | Warm introductions to target personas | Free |
| Paid recruitment (UserTesting, Respondent.io) | 80%+ | Fast turnaround, specific demographics | $50-$150 per interview |
| Existing customer base (if pivoting) | 30-50% | Users who already experience the problem | Free |

The interview framework

Rule 1: Never ask “Would you use this?” People will say yes to be polite. Instead, ask about past behavior.

Rule 2: Talk about their life, not your idea. The interview is about their problem, not your solution. Your solution should not be mentioned until the last 5 minutes, if at all.

Core questions:

- “Tell me about the last time you dealt with [problem area]. Walk me through what happened.”

- “How often does this come up?”

- “What do you currently do to handle it?”

- “What’s the most frustrating part of how you handle it today?”

- “Have you looked for solutions? What did you find? Why didn’t you use them?”

- “If this were solved perfectly, what would change for you?”

Red flag answers (problem may not be real):

- “I guess it’s kind of annoying sometimes”

- “I haven’t really looked for solutions”

- “It’s not a huge deal”

Strong signal answers (problem is real and painful):

- “I spend 3 hours a week on this and it’s killing me”

- “I tried [Competitor A] and [Competitor B] and both fell short because…”

- “I built a spreadsheet for this but it breaks constantly”

Key takeaway: In customer discovery, past behavior beats future promises. Ask “What did you do last time this happened?” not “Would you use a tool that does X?” Opinions are unreliable. Actions are evidence.

Analyzing interview data

After 15-25 interviews, organize findings into patterns:

- Problem frequency: How often do they encounter this? (Daily = strong signal. Monthly = weaker signal.)

- Current workarounds: What are they doing today? (Active workarounds = strong signal they will switch to a better option.)

- Willingness to change: Have they tried alternatives? (If they have tried and failed to find a solution, demand is strong.)

- Budget authority: Can this person or their team make purchasing decisions? (If not, you need to interview the actual buyer.)

If 80%+ of interviews confirm the problem is real, frequent, and painful, problem validation is complete. If fewer than 60% confirm, reconsider your hypothesis.
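The thresholds above are simple enough to apply by hand, but a small script keeps the tally honest once you have 20 sets of notes. The sketch below is only an illustration: the `Interview` fields are a hypothetical coding scheme, and the "inconclusive" label for the 60-80% band is an assumption, since the thresholds leave that range undefined.

```python
from dataclasses import dataclass


@dataclass
class Interview:
    """One coded interview. Field names are hypothetical, not from any tool."""
    is_real: bool      # they described an actual, recent instance of the problem
    is_frequent: bool  # it comes up often enough to matter
    is_painful: bool   # they use a workaround or have looked for alternatives

    def confirms_problem(self) -> bool:
        return self.is_real and self.is_frequent and self.is_painful


def problem_verdict(interviews: list[Interview], min_sample: int = 15) -> str:
    """Apply the 80% / 60% thresholds to a batch of coded interviews."""
    total = len(interviews)
    if total < min_sample:
        return "too few interviews"
    confirmed = sum(i.confirms_problem() for i in interviews)
    # Integer comparisons avoid floating-point edge cases at exactly 80% / 60%.
    if confirmed * 100 >= 80 * total:
        return "problem validated"
    if confirmed * 100 < 60 * total:
        return "reconsider hypothesis"
    return "inconclusive"  # assumption: this band is not defined in the text
```

Coding each interview on all three dimensions, rather than a single "positive/negative" flag, makes it harder for one or two enthusiastic conversations to inflate the result.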

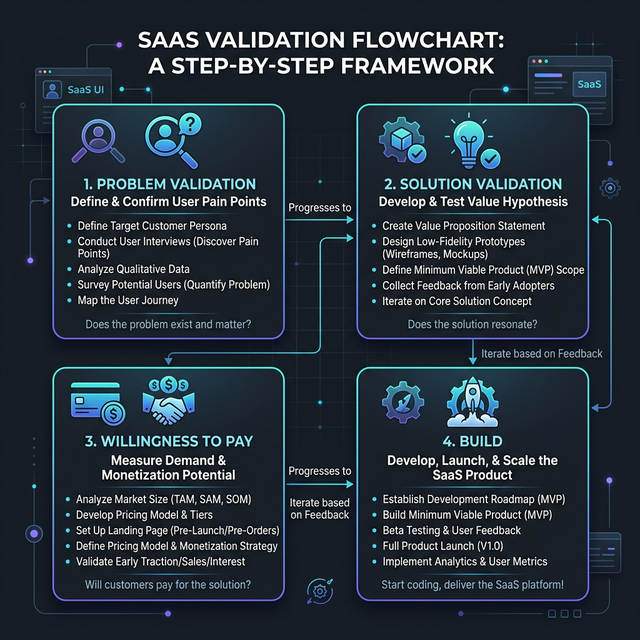

Landing page tests

A landing page test validates demand and messaging before you build anything. You create a page that describes your solution, drive targeted traffic to it, and measure conversion.

What the landing page needs

- A clear headline stating the problem and your solution

- 3-4 bullet points describing what the product does

- A screenshot or mockup of the product (does not need to be real - can be a designed prototype)

- A prominent CTA: “Join the waitlist” or “Get early access” with an email capture form

- Trust elements: your name, company, brief bio

Traffic sources for landing page tests

| Source | Budget needed | Time to results | Quality of signal |
|---|---|---|---|
| Google Ads (search) | $500-$1,500 | 1-2 weeks | High - captures active intent |
| LinkedIn Ads | $500-$2,000 | 1-2 weeks | High for B2B - precise targeting |
| Reddit/community posts | $0 | 1 week | Medium - organic interest but self-selecting |
| Cold email with landing page link | $100-$300 (tooling) | 2-3 weeks | Medium - depends on list quality |
| Social media (organic) | $0 | 1-2 weeks | Low - biased by existing network |

Interpreting results

| Metric | Weak signal | Moderate signal | Strong signal |
|---|---|---|---|
| Landing page conversion rate | Under 3% | 3-5% | Over 5% |
| Waitlist signups (from 1,000 visitors) | Under 30 | 30-80 | Over 80 |
| Email reply rate to follow-up | Under 10% | 10-25% | Over 25% |

A landing page conversion rate above 5% from cold paid traffic is a solid signal. Below 3% means either your messaging is wrong or the demand is not strong enough.
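As a rough sketch, the conversion thresholds can be encoded directly. The second function is an addition that is not part of the thresholds themselves: a standard Wilson score interval, included on the assumption that you want to see how much uncertainty sits around the observed rate at a given traffic volume. Function names are illustrative.

```python
import math


def conversion_signal(visitors: int, signups: int) -> str:
    """Classify a landing page result as weak (<3%), moderate (3-5%), or strong (>5%)."""
    if visitors <= 0 or not 0 <= signups <= visitors:
        raise ValueError("need visitors > 0 and 0 <= signups <= visitors")
    if signups * 100 > 5 * visitors:
        return "strong"
    if signups * 100 >= 3 * visitors:
        return "moderate"
    return "weak"


def wilson_interval(visitors: int, signups: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for the true conversion rate."""
    if visitors <= 0 or not 0 <= signups <= visitors:
        raise ValueError("need visitors > 0 and 0 <= signups <= visitors")
    p = signups / visitors
    denom = 1 + z * z / visitors
    center = (p + z * z / (2 * visitors)) / denom
    half = z * math.sqrt(p * (1 - p) / visitors + z * z / (4 * visitors ** 2)) / denom
    return center - half, center + half
```

With 62 signups from 1,000 visitors the interval runs from about 4.9% to 7.9%. With 6 signups from 100 visitors it runs from about 2.8% to 12.5%, which is why a small traffic sample cannot separate a weak signal from a strong one.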

Key takeaway: A landing page test with $500-$1,500 in paid traffic gives you a demand signal in 2 weeks. This is the cheapest way to validate demand before building. If you cannot get above 3% conversion from targeted traffic, reconsider your positioning or product concept.

Waitlist strategies that actually work

A waitlist is not just a list of emails. It is a validation tool that, when used correctly, tells you about commitment level, use case diversity, and willingness to pay.

Building an effective waitlist

- Ask a qualifying question at signup: “What’s your biggest challenge with [problem area]?” This tells you whether signups match your ICP and confirms the problem.

- Segment by urgency: Add a question like “When do you need a solution?” to identify high-intent prospects vs casual interest.

- Follow up personally: Email the first 50 waitlist signups manually. Ask them about their current workflow and what they would pay for a solution.

Waitlist size benchmarks

| Waitlist size | What it means | Next step |
|---|---|---|
| Under 50 | Interest is limited or marketing isn’t working | Reassess positioning, expand traffic sources |
| 50-200 | Moderate interest | Validate willingness to pay with a subset |
| 200-500 | Strong interest | Solid foundation for launch. Start building. |
| 500+ | High demand | Consider pre-selling or annual presale to fund development |

A waitlist of 200+ with qualifying responses that match your ICP is a strong enough signal to proceed to building - assuming willingness to pay is validated.
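One way to apply the benchmarks is to count only signups whose qualifying answer matches your ICP, then look up the next step. This is a sketch under assumptions: the `Signup` fields and urgency values are hypothetical, counting only ICP-qualified signups is an interpretation of the paragraph above, and the overlapping band edges (200 and 500) are resolved toward the higher band.

```python
from dataclasses import dataclass


@dataclass
class Signup:
    email: str
    matches_icp: bool  # judged from the answer to the qualifying question
    urgency: str       # hypothetical values: "now", "this_quarter", "someday"


def waitlist_next_step(signups: list[Signup]) -> tuple[int, str]:
    """Return the ICP-qualified count and the matching next step."""
    qualified = sum(s.matches_icp for s in signups)
    if qualified < 50:
        step = "Reassess positioning, expand traffic sources"
    elif qualified < 200:
        step = "Validate willingness to pay with a subset"
    elif qualified < 500:
        step = "Start building, assuming willingness to pay is validated"
    else:
        step = "Consider pre-selling or an annual presale"
    return qualified, step


def high_intent(signups: list[Signup]) -> list[Signup]:
    """Signups worth a personal follow-up first: ICP match and urgent need."""
    return [s for s in signups if s.matches_icp and s.urgency == "now"]
```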

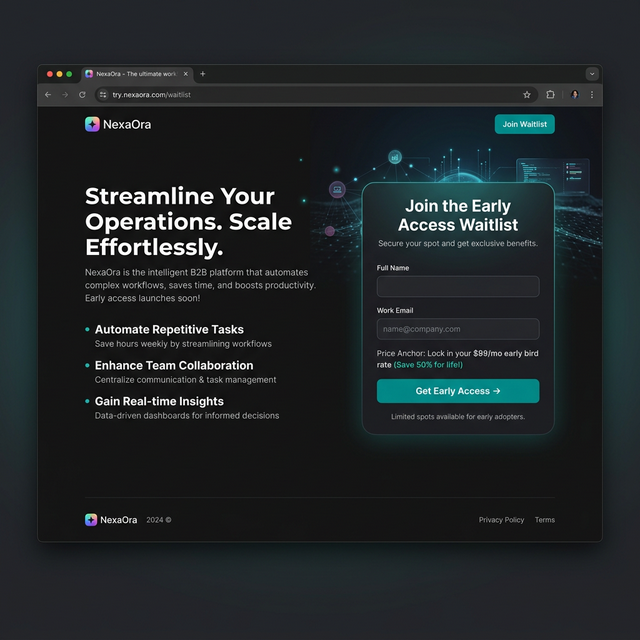

Fake door testing

Fake door testing means presenting a feature, product, or option that does not exist yet and measuring how many users attempt to use it. It is one of the fastest ways to validate specific features or product concepts.

How to run a fake door test

- Add a button, link, or menu item for the feature you want to validate

- When a user clicks it, show a message: “This feature is coming soon. Want early access?” with an email capture

- Track click-through rate and signup rate

When fake door tests work best

- Validating a new feature within an existing product

- Testing whether users want an integration or add-on

- Comparing demand for two different product ideas (A/B test the doors)

Ethical considerations

Be transparent. Do not let users believe the feature exists and then frustrate them. The “coming soon” message should appear immediately after the click, not after a loading screen or a multi-step form. And if someone signs up for early access, actually follow up when you build it.

Key takeaway: Fake door testing validates demand for specific features in days, not weeks. A 5%+ click-through rate on a feature door in an existing product is a strong signal to prioritize building that feature.
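The two rates from a fake door test can be computed as follows. This is a minimal sketch: the function and key names are illustrative, and the 5% click-through threshold is the one from the key takeaway above.

```python
def fake_door_metrics(exposed: int, clicks: int, signups: int) -> dict:
    """Compute click-through and signup rates for a fake door test.

    exposed: users who saw the button or menu item
    clicks:  users who clicked it
    signups: users who left an email after the "coming soon" message
    """
    if exposed <= 0 or not 0 <= signups <= clicks <= exposed:
        raise ValueError("expected 0 <= signups <= clicks <= exposed and exposed > 0")
    return {
        "click_through_rate": clicks / exposed,
        "signup_rate": signups / clicks if clicks else 0.0,
        "strong_signal": clicks * 100 >= 5 * exposed,  # 5%+ click-through
    }
```

Tracking the signup rate separately matters because a click can be curiosity, while leaving an email after seeing "coming soon" is a small commitment.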

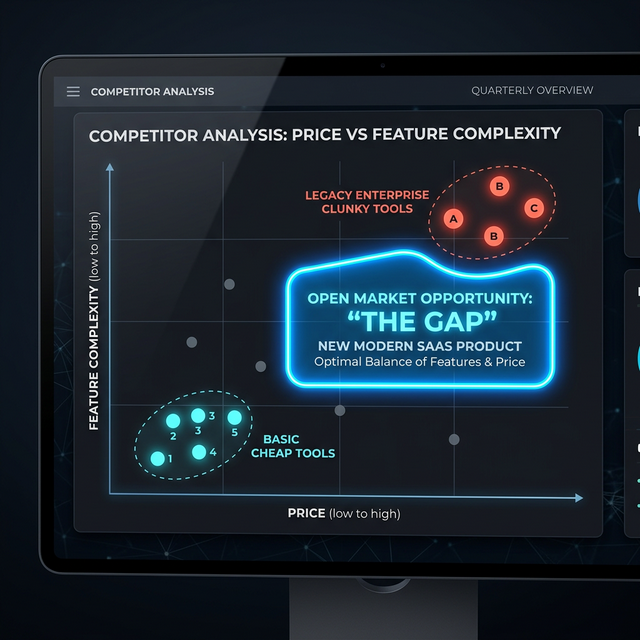

Competitor analysis framework

Understanding what exists in the market is not about copying - it is about finding gaps. Every successful SaaS product occupies a position that competitors do not serve well.

The competitor analysis matrix

For each competitor, document:

| Factor | What to evaluate | Where to find data |
|---|---|---|
| Positioning | Who they serve and how they describe themselves | Website, pricing page, G2/Capterra reviews |
| Pricing | Price point, model (per-seat, usage, flat) | Pricing page, sales conversations |
| Strengths | What reviewers praise most | G2 reviews (filter 4-5 star) |
| Weaknesses | Common complaints | G2 reviews (filter 1-2 star), Reddit, social media |
| ICP | Who their ideal customer is | Case studies, testimonials, content topics |
| Missing features | Commonly requested features they lack | Feature request forums, review complaints, support threads |

Finding your gap

The strongest SaaS positioning comes from one of three gaps:

- Underserved segment: Competitors target enterprise; you serve SMBs. Competitors target marketing teams; you serve operations teams.

- Workflow gap: Competitors solve part of the workflow but force users to use another tool for the rest. You cover the full workflow.

- Simplicity gap: Competitors are complex and require training. You are simple and self-serve.

If you cannot identify a clear gap, your product will compete on price - which is the weakest position in SaaS.

Market sizing for SaaS

Market sizing tells you whether the opportunity is large enough to build a business around. You do not need a McKinsey report. You need a sanity check.

Bottom-up market sizing (the useful approach)

- Identify your ICP: Be specific. “Marketing managers at B2B companies with 10-100 employees in North America.”

- Estimate the number of companies that match your ICP: Use LinkedIn Sales Navigator, industry reports, or census data. Example: 250,000 companies match.

- Estimate addressable percentage: Not all 250K are reachable or interested. Typically 10-20% are addressable. 250K x 15% = 37,500.

- Apply conversion assumptions: Realistic conversion from addressable to paying customer is 1-3%. 37,500 x 2% = 750 customers.

- Multiply by expected ARPU: 750 customers x $150/month = $112,500 MRR = $1.35M ARR.

Is $1.35M ARR enough to build a business around? For a bootstrapped SaaS, absolutely. For a venture-backed SaaS targeting $100M ARR, probably not.
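The same arithmetic as a small function, so you can rerun it with your own inputs. The function and key names are illustrative, and the inputs in the usage note are the example figures from the steps above.

```python
def bottom_up_market_size(
    icp_count: int,
    addressable_share: float,
    conversion_rate: float,
    arpu_monthly: float,
) -> dict[str, float]:
    """Bottom-up sizing: ICP count -> addressable -> customers -> MRR -> ARR."""
    addressable = icp_count * addressable_share
    customers = addressable * conversion_rate
    mrr = customers * arpu_monthly
    return {
        "addressable": addressable,
        "customers": customers,
        "mrr": mrr,
        "arr": mrr * 12,
    }


# Worked example from the text: 250K companies, 15% addressable, 2% conversion, $150/month.
base = bottom_up_market_size(250_000, 0.15, 0.02, 150)
print(f"customers: {base['customers']:.0f}, ARR: ${base['arr']:,.0f}")
```

Because the inputs are ranges, it is worth running the ends as well as the midpoint. With the same 250,000 companies and $150/month, the low end (10% addressable, 1% conversion) gives 250 customers and $450K ARR, and the high end (20%, 3%) gives 1,500 customers and $2.7M ARR.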

Market size reality check

| Target ARR | Required market size | Typical viability |
|---|---|---|
| $500K-$2M | Niche market, 500-2K potential customers | Bootstrapped SaaS, lifestyle business |
| $2M-$10M | Mid-size market, 2K-20K potential customers | Profitable SaaS business, small team |
| $10M-$50M | Large market, 20K-100K potential customers | Growth-stage, may need external funding |
| $50M+ | Very large market, 100K+ potential customers | Venture-scale, requires significant investment |

Key takeaway: Bottom-up market sizing is more useful than top-down (“the global SaaS market is $300 billion”). Count real ICPs, apply realistic conversion rates, and calculate whether the opportunity supports your business goals.

Validating willingness to pay

This is the step founders skip most often, and it is the most important. People will tell you they have a problem and that they want a solution. They will not always pay for one.

Methods to validate willingness to pay

Method 1: Direct pricing question in interviews

After describing your solution concept, ask: “If this existed today and solved [problem] the way I described, what would you expect to pay for it monthly?”

Do not suggest a price first. Let them anchor. Their answer tells you:

- If they name a number: they see value and have a mental model for pricing

- If they hesitate: they are not sure it is worth paying for

- If they say “I’d need to see it first”: normal, but push gently - “ballpark, would this be a $50/month tool or a $500/month tool?”

Method 2: Pre-sale or letter of intent

Ask waitlist members to put down a deposit or sign a letter of intent for early access at a specific price. Even a $50 deposit validates willingness to pay far more than any survey.

Method 3: Price anchoring on landing page

Show a price on your landing page and measure conversion. “Join the waitlist for early access at $99/month” converts differently than “Join the waitlist for early access” (no price). The price-included version gives you a willingness-to-pay signal alongside demand validation.

Reading the signals

| Signal | Interpretation |
|---|---|
| 5+ interview subjects name a price close to your target | Strong willingness to pay |
| Interview subjects suggest a price 50%+ below your target | Your value proposition needs strengthening, or you are targeting the wrong segment |
| 10+ people (or ~10% of waitlist) put down pre-sale deposits | Build it. You have paying demand. |
| Nobody will commit any money upfront | Re-evaluate the value proposition and urgency of the problem |
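The signal table can be turned into a rough checker. Several details here are assumptions rather than rules from the table: "close to your target" is taken to mean within 25% (the `tolerance` parameter), "suggest a price 50%+ below" is read as the median quote being at most half the target, and all names are illustrative.

```python
from statistics import median


def wtp_signals(
    quotes: list[float],
    target_price: float,
    deposits: int,
    waitlist_size: int,
    tolerance: float = 0.25,  # assumption: "close" means within 25% of target
) -> list[str]:
    """Map interview price quotes and pre-sale deposits to the signal table."""
    findings = []
    near = [q for q in quotes if abs(q - target_price) <= tolerance * target_price]
    if len(near) >= 5:
        findings.append("strong willingness to pay")
    if quotes and median(quotes) <= 0.5 * target_price:
        findings.append("strengthen value proposition or retarget segment")
    if deposits >= 10 or (waitlist_size > 0 and deposits * 10 >= waitlist_size):
        findings.append("build: paying demand exists")
    elif deposits == 0:
        findings.append("re-evaluate value proposition and urgency")
    return findings
```

For example, `wtp_signals([90, 100, 110, 80, 120, 40], 99, deposits=12, waitlist_size=200)` reports both a strong price signal and paying demand, while five quotes between $20 and $50 against a $100 target with no deposits reports the two warning signals.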

Key takeaway: Pre-sales and deposits are the strongest validation signal. If someone will pay $50 for a product that does not exist yet, demand is real. If nobody will commit money, adjust your concept before building.

AI-accelerated validation: compressing months into days

The validation timeline has changed dramatically in 2026. Tools like v0, Lovable, and Bolt now let you build a functional prototype in hours, not weeks. This compresses the solution validation phase from “build a clickable mockup over 2–3 weeks” to “generate a working app in an afternoon and put it in front of users the same day.”

What this changes about the validation process:

| Validation step | Traditional timeline | AI-accelerated timeline | Cost |
|---|---|---|---|
| Customer discovery interviews | 2–3 weeks | 2–3 weeks (no shortcut here) | Free |
| Landing page test | 1–2 weeks to build + 2 weeks to run | 1 day to build + 2 weeks to run | $200–$500 in ads |
| Clickable prototype | 2–4 weeks | 1–3 days | $500–$2,000 |
| Functional MVP for user testing | 4–8 weeks | 1–2 weeks | $2,000–$10,000 |
| Total validation cycle | 8–16 weeks | 4–6 weeks | $500–$3,000 |

The critical nuance: AI tools compress the building part of validation, not the learning part. You still need 15–25 customer interviews. You still need 2 weeks of landing page traffic to get statistically meaningful data. You still need to watch real users struggle with your prototype. The tools make it cheaper and faster to create the artifacts - they do not make it faster to learn from them.

How to use AI tools in your validation process

- Landing page test: Use v0 or Bolt to generate a landing page with your value proposition, pricing, and a waitlist form. Deploy to Vercel or Netlify in minutes. Run $200–$500 in targeted ads for 2 weeks.

- Fake door test: Build a realistic product interface with Lovable or v0. Include the feature you want to validate behind a “coming soon” button. Measure click-through rate on that button.

- Functional prototype: Generate a working app with Lovable or Bolt that demonstrates your core workflow. Put it in front of 10–20 users from your interview pool. Watch them use it. The feedback from a functional prototype is qualitatively different from feedback on a mockup.

- Pricing validation: Build two versions of your landing page with different price points. Split traffic between them. Measure conversion rate difference. AI tools make this A/B test trivial to set up.
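For the pricing split test, the conversion difference between the two pages is only meaningful if it is larger than what chance would produce. The sketch below uses a standard two-proportion z-test, which is one reasonable choice among several and not something the steps above prescribe. The traffic numbers and price points in the example are hypothetical.

```python
import math


def compare_price_pages(
    signups_a: int, visitors_a: int, signups_b: int, visitors_b: int
) -> tuple[float, float]:
    """Two-proportion z-test. Returns (z statistic, two-sided p-value)."""
    if visitors_a <= 0 or visitors_b <= 0:
        raise ValueError("both pages need at least one visitor")
    p_a = signups_a / visitors_a
    p_b = signups_b / visitors_b
    pooled = (signups_a + signups_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if se == 0:
        raise ValueError("no variation in outcomes; cannot compare")
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Hypothetical result: page A shows $49/month, page B shows $99/month.
z, p = compare_price_pages(60, 1000, 40, 1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

In this made-up example the lower price converts at 6% against 4%, and the p-value comes out at roughly 0.04, so the gap is unlikely to be noise. Whether the lower price is the better choice is a separate question, since fewer signups at twice the price can still mean more revenue.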

Key takeaway: AI tools have cut the cost of validation artifacts by 60–80%, but the learning timeline is unchanged. Use the savings to run more experiments, not to skip steps. The founders who validate fastest in 2026 are the ones who build prototypes in days and spend weeks learning from user behavior.

When to stop validating and start building

Validation paralysis is real. At some point, you have enough evidence to make a decision. Here is the framework.

Something I had to learn the hard way: Over-validation is a real thing. After 25 interviews and a waitlist of 200+, additional validation is procrastination disguised as diligence. At some point, the only way to reduce remaining risk is to build something and ship it.

The validation checklist

You are ready to build when you can check all five:

- Problem confirmed: 80%+ of interview subjects confirm the problem is real, frequent, and painful

- Solution direction validated: Prototype feedback or solution-specific interview responses confirm your approach addresses the pain

- Demand signal exists: Waitlist of 100+ or landing page conversion above 5% from targeted traffic

- Willingness to pay confirmed: At least 5 interview subjects named a price near your target, or you have pre-sale deposits

- Market size sufficient: Bottom-up calculation supports your business model

All five is the goal. In practice, if you have 4 of 5, you probably have enough to start an MVP. If you have 3 or fewer, keep validating.
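The checklist can be written down as a small scoring function so the build decision comes from recorded evidence rather than a feeling. This is a sketch: the `Evidence` field names are hypothetical, and the thresholds are the ones listed in the checklist.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    interview_confirm_share: float       # e.g. 0.85 if 85% confirmed the problem
    solution_direction_confirmed: bool   # from prototype or solution-specific feedback
    waitlist_size: int
    landing_conversion: float            # e.g. 0.06 for 6% from targeted traffic
    interviews_near_target_price: int
    presale_deposits: int
    market_size_supports_model: bool     # result of the bottom-up calculation


def checklist(e: Evidence) -> dict[str, bool]:
    """Evaluate the five checkpoints."""
    return {
        "problem_confirmed": e.interview_confirm_share >= 0.80,
        "solution_direction_validated": e.solution_direction_confirmed,
        "demand_signal": e.waitlist_size >= 100 or e.landing_conversion > 0.05,
        "willingness_to_pay": e.interviews_near_target_price >= 5 or e.presale_deposits > 0,
        "market_size_sufficient": e.market_size_supports_model,
    }


def build_decision(e: Evidence) -> str:
    passed = sum(checklist(e).values())
    if passed == 5:
        return "build"
    if passed == 4:
        return "probably enough to start an MVP"
    return "keep validating"
```

Printing the `checklist` result alongside the decision shows which checkpoint is missing, which tells you what the next experiment should be.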

What to build first

After validation, do not build a full SaaS product. Build an MVP that solves the core workflow for your highest-intent validated users. For MVP planning, read How to Build an MVP and How much does an MVP cost in 2026?.

The cost of over-validating

Validation has diminishing returns. Once you have 25 interviews, 200 waitlist signups, and a willingness-to-pay signal, more interviews will mostly repeat what you already know. The remaining risk can only be resolved by building and shipping.

If validation takes more than 8 weeks, you are either:

- Not finding evidence (red flag - reconsider the idea)

- Finding evidence but afraid to commit (yellow flag - acknowledge the fear and ship)

- Running validation without a clear framework (process issue - use the checklist above)

Key takeaway: Stop validating when you have evidence across all five checkpoints. Remaining risk is resolved by building an MVP, not by conducting interview number 30. Over-validation is a common form of founder procrastination.

FAQ

How long should SaaS idea validation take?

A thorough SaaS validation process takes 4-8 weeks. Weeks 1-2: customer discovery interviews (15-25 interviews). Weeks 3-4: landing page test with paid traffic. Weeks 5-6: analyze results and run willingness-to-pay experiments. Weeks 7-8: synthesize findings and make a build/no-build decision. If validation takes more than 8 weeks, you are either overthinking it or not getting clear signals, which is itself a signal worth paying attention to.

How many customer interviews do I need before building?

I’d aim for 15-25 interviews. Below 15, your sample is too small to identify reliable patterns - one or two enthusiastic interviews can create false confidence. Above 25, you typically see diminishing returns and the same themes repeating. If 80%+ of your interviews confirm the problem is real, frequent, and painful, problem validation is complete. You still need solution validation before building.

Can I validate a SaaS idea without spending money?

Yes, but it takes longer. Free validation methods include LinkedIn cold outreach for interviews (5-10% response rate), posting in relevant online communities, building a landing page on a free tool, and driving organic social traffic. The trade-off is time: what takes 2-3 weeks with $1,000 in paid traffic takes 6-8 weeks organically. If your time is worth more than $1,000, paid validation is more efficient.

What if interviews are positive but my landing page doesn’t convert?

This usually means your messaging is wrong, not your idea. Interview subjects understood the value because you explained it in conversation. Your landing page needs to deliver that same clarity in 10 seconds of scanning. Rewrite your headline to focus on the specific pain point that resonated most in interviews, use their exact language (not your product jargon), and A/B test different angles. If conversion stays below 3% after 3-4 messaging iterations, reconsider whether the pain is acute enough to drive action.

How do I know if my market is big enough?

Use bottom-up market sizing: count the number of people or companies in your specific ICP, estimate what percentage you can realistically reach (10-20%), apply a conversion rate (1-3%), and multiply by your target price. If the result supports your business model - $500K+ ARR for a bootstrapped business, $10M+ for venture-backed - the market is large enough. Be skeptical of top-down market size claims like “the global SaaS market is $300 billion” - these tell you nothing about your specific opportunity.

Should I validate with a prototype or just interviews and landing pages?

It depends on product complexity. For products with a straightforward value proposition (invoicing, scheduling, project management), interviews and landing pages are sufficient for validation. For products with novel or complex value propositions (AI-powered analysis, specialized workflows, new interaction models), a clickable prototype helps solution validation because users cannot evaluate what they cannot imagine. A Figma prototype costs $2,000-$5,000 and takes 1-2 weeks - significantly cheaper than building the wrong product.

Ready to validate? Start with 5 customer interviews this week. If 4 out of 5 describe the same painful workaround, you’re onto something. See how we build MVPs after validation →