Table of contents

- Quick answer: the 9 MVP mistakes that waste the most money

- 2026 MVP failure benchmarks: the numbers founders don't check

- Mistake 1: Building too much

- Mistake 2: Skipping validation

- Mistake 3: Choosing the wrong tech stack

- Mistake 4: No analytics from day one

- Mistake 5: Perfectionism over shipping

- Mistake 6: Ignoring user feedback

- Mistake 7: Underestimating costs

- Mistake 8: No go-to-market plan

- Mistake 9: Wrong development partner

- The cost of getting it right vs. getting it wrong

- A pre-launch checklist to avoid all nine mistakes

- Related reading

$94,000 on an MVP that nobody used. Not because the idea was bad - but because the founder made five of the nine mistakes on this list.

They built too many features, chose an exotic tech stack their agency recommended (but couldn’t maintain cheaply), launched without analytics, and had no plan for getting users after launch. The painful part: their core idea was solid. A simpler version - 60% less scope, standard tech, proper tracking - would have cost $35,000 and launched three months earlier. They’d have real user data by now instead of a polished product gathering dust.

The mistakes below aren’t theoretical - they’re the specific, recurring errors that blow up MVP budgets and timelines in 2026. Each one comes with what to do instead.

Quick answer: the 9 MVP mistakes that waste the most money

| Mistake | Typical cost of the mistake | How common |
|---|---|---|
| Building too much | $20K-$80K+ in unnecessary features | Very common |
| Skipping validation | Entire budget (building something nobody wants) | Common |
| Choosing the wrong tech stack | $15K-$40K in rewrites or vendor lock-in | Common |
| No analytics from day one | Months of directionless iteration | Very common |
| Perfectionism over shipping | 2-4 months of delayed learning | Common |
| Ignoring user feedback | $10K-$30K building the wrong improvements | Moderate |
| Underestimating costs | 40-100% budget overrun | Very common |
| No go-to-market plan | Zero users despite a working product | Common |
| Wrong development partner | $20K-$60K in rework or abandoned code | Moderate |

Key takeaway: Most founders don’t fail because of a bad idea - they fail because of execution mistakes that inflate costs, delay launch, and prevent learning. Fix these nine and you dramatically improve your odds.

For context on the right way to approach MVP development, see How to Build an MVP: Complete Guide for Founders and SMB Owners.

2026 MVP failure benchmarks: the numbers founders don’t check

Before you commit a budget, these data points change how you scope:

| Benchmark | 2026 data point | Source |
|---|---|---|
| Percent of startups that fail due to “no market need” | 42% - the single largest failure cause | CB Insights startup failure research (stable methodology) |
| Typical MVP budget overrun | 40–100% above initial estimate | Industry MVP cost benchmarks, 2026 |
| AI-assisted MVP cost reduction vs traditional agency | 40–60% on comparable scope | Codivox composite data, 2025–2026 |
| Median time-to-first-paying-customer for disciplined MVPs | 8–14 weeks from build start | Y Combinator MVP patterns, 2026 |
| Percent of MVPs launched without analytics instrumented | Over 50% in practice | Observed across audits |
| Percent of feature scope that typically isn’t used post-launch | 60–80% of initially prioritized features | Standish Group CHAOS research (historical, still widely cited) |

The 2026 pattern that’s changed the economics: AI-assisted delivery (tools like Cursor, Lovable, and Kiro reviewed by senior engineers) has cut realistic MVP budgets for well-scoped projects from $80K–$150K (traditional agency) to $35K–$75K. That means the “building too much” mistake hurts twice - the overbuild costs more, and the opportunity cost (shipping later) is larger because competitors now ship faster too.

Key takeaway: The biggest MVP risk in 2026 isn’t the cost of the build - it’s the cost of the delay. Every week spent on unneeded features is a week competitors spend learning from real users.

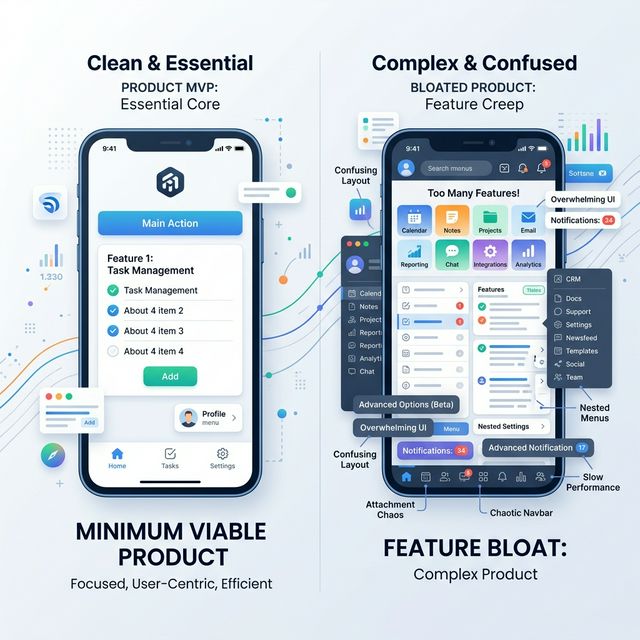

Mistake 1: Building too much

I’ll be blunt: Every founder thinks their product is the exception. “We really do need all 30 features.” You don’t. I have never seen an MVP fail because it had too few features. I have seen dozens fail because they had too many.

This is the most common and most expensive mistake, and I’ve watched it destroy budgets more times than I can count. It manifests as:

- A feature list of 30+ items for “version one”

- “While we’re at it, let’s also add…”

- Building admin dashboards, reporting, and settings before anyone uses the core product

- Designing for scale before you have 10 users

Why it happens

Founders confuse an MVP with a “scaled-down version of the full product.” They’re not the same thing (understanding the difference between an MVP, Prototype, and Proof of Concept is crucial here). An MVP tests one hypothesis. A scaled-down product tries to do everything, just less polished.

What it costs

Every unnecessary feature adds 1-3 weeks of development time. At typical agency rates ($150-$250/hour), that’s $6,000-$30,000 per feature - plus ongoing maintenance costs.

A 30-feature MVP at $10K average per feature = $300,000. A 7-feature MVP testing the same core hypothesis = $70,000.
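The arithmetic behind those numbers is simple enough to rerun with your own quotes. Here is a minimal sketch; the 40-hour work week and the function names are my assumptions for illustration, while the rates, week counts, and $10K average come from the figures above.

```python
HOURS_PER_WEEK = 40  # assumption: one full-time developer week


def feature_cost_range(weeks_low=1, weeks_high=3, rate_low=150, rate_high=250):
    """Return the (low, high) dollar cost of building one feature."""
    low = weeks_low * HOURS_PER_WEEK * rate_low
    high = weeks_high * HOURS_PER_WEEK * rate_high
    return low, high


def mvp_cost(feature_count, avg_cost_per_feature=10_000):
    """Total build cost at a flat average cost per feature."""
    return feature_count * avg_cost_per_feature


low, high = feature_cost_range()
print(f"Per feature: ${low:,} to ${high:,}")    # Per feature: $6,000 to $30,000
print(f"30-feature MVP: ${mvp_cost(30):,}")     # 30-feature MVP: $300,000
print(f"7-feature MVP: ${mvp_cost(7):,}")       # 7-feature MVP: $70,000
```

Swap in your agency's actual hourly rate and your own feature count; the gap between the two totals is the part worth staring at.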

What to do instead

Apply the “one workflow” test: Can a user complete the single most important action in your product? If yes, you have enough features. Everything else is V2.
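If it helps to make the test mechanical, here is a rough sketch of how you might run it over a backlog. The invoicing features and the `needed_for_core_workflow` flag are hypothetical; deciding the flag honestly is still your job.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    # True only if a user cannot complete the core workflow without it.
    needed_for_core_workflow: bool


def split_scope(features):
    """Split a backlog into (mvp, v2) lists of feature names."""
    mvp = [f.name for f in features if f.needed_for_core_workflow]
    v2 = [f.name for f in features if not f.needed_for_core_workflow]
    return mvp, v2


# Hypothetical backlog for an invoicing product.
backlog = [
    Feature("Create invoice", True),
    Feature("Send invoice by email", True),
    Feature("Admin dashboard", False),
    Feature("Custom themes", False),
]

mvp, v2 = split_scope(backlog)
print("MVP:", mvp)  # MVP: ['Create invoice', 'Send invoice by email']
print("V2:", v2)    # V2: ['Admin dashboard', 'Custom themes']
```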

Read our prioritization guide for specific frameworks: How to Prioritize Features for Your MVP.

Key takeaway: Every feature you don’t build saves $6,000-$30,000 in development costs and 1-3 weeks on your timeline. Apply the “one workflow” test ruthlessly.

Mistake 2: Skipping validation

Building before validating is like printing 10,000 copies of a book before anyone reads the first chapter. I know that sounds obvious, but I still see funded founders skip this step every month.

Signs you’re skipping validation

- Your evidence for demand is “everyone I talked to said it’s a great idea” (politeness is not validation)

- You haven’t found anyone willing to pre-pay or sign a letter of intent

- Your market research is “I Googled it and there’s no competitor” (that’s usually a bad sign, not a good one)

- You can’t describe your target user’s current painful workaround in detail

What it costs

The entire MVP budget - potentially $30,000-$150,000 - spent building something nobody will pay for.

What to do instead

Before writing a single line of code, validate:

- Problem validation: Can you find 10 people who describe the same problem unprompted?

- Solution validation: Will 3 of those people pay (or commit to pay) for a solution before it exists?

- Channel validation: Can you reach your target users through a repeatable channel?

If you can’t pass all three, your MVP budget is better spent on validation than development.
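The three gates can be written down as a small go/no-go check. This is only a sketch: the thresholds (10 unprompted problem reports, 3 commitments) come from the list above, and the function and argument names are made up for illustration.

```python
def validation_gaps(unprompted_problem_reports, paid_or_committed, has_repeatable_channel):
    """Return the list of validation gates that have not been passed yet."""
    gaps = []
    if unprompted_problem_reports < 10:
        gaps.append("problem")
    if paid_or_committed < 3:
        gaps.append("solution")
    if not has_repeatable_channel:
        gaps.append("channel")
    return gaps


gaps = validation_gaps(unprompted_problem_reports=12,
                       paid_or_committed=1,
                       has_repeatable_channel=True)
if gaps:
    print("Keep validating:", ", ".join(gaps))  # Keep validating: solution
else:
    print("All three gates passed")
```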

For a detailed validation process, see How to Validate Your SaaS Idea Before Building.

Mistake 3: Choosing the wrong tech stack

The tech stack decision seems technical, but it’s actually a business decision with massive cost implications. (This often stems from a lack of clarity on whether you’re building traditional SaaS vs Custom Software.)

Common wrong-stack scenarios

| Scenario | What happens | Cost impact |
|---|---|---|
| Exotic/bleeding-edge stack | Hard to find affordable developers later | 2-3x ongoing costs |
| Enterprise stack for a simple product | Over-engineered, slow to iterate | $20K-$40K unnecessary upfront |
| No-code when you need custom logic | Hit platform limits at 6 months, rebuild | Full rebuild cost |
| Custom everything when tools exist | Building solved problems from scratch | $15K-$30K wasted |

Why it happens

Three reasons: (1) the agency recommends what they know best, not what’s best for you, (2) the founder or CTO picks “cool” technology over boring-but-proven options, or (3) nobody asks “What happens when we need to hire a second developer?”

What to do instead

Choose tech based on three criteria:

- Developer availability: Can you hire for this stack at a reasonable rate?

- Speed of iteration: Can your team ship changes in days, not weeks?

- Maturity: Are there well-documented solutions for common problems (auth, payments, email)?

For most B2B MVPs in 2026, that means: Next.js or a similar React framework, PostgreSQL, a standard cloud provider, and third-party services for auth, payments, and email. Not exciting. Very effective. Martin Fowler’s guidance on technology selection reinforces this: optimize for learning speed, not technical sophistication.

Key takeaway: The best MVP tech stack is the most boring one that solves your problem. Exotic choices increase hiring costs, slow down iteration, and create vendor lock-in.

Mistake 4: No analytics from day one

“We’ll add tracking later” is one of the most expensive sentences in MVP development.

What you lose without day-one analytics

- You can’t tell which features people actually use

- You can’t measure activation (what % of signups complete the core action?)

- You can’t identify drop-off points in your workflow

- You make iteration decisions based on gut feelings instead of data

- You can’t show traction to investors (they ask for numbers, which means you need to know your essential SaaS metrics and KPIs)

What it costs

The cost doesn’t show up as a line item - it shows up as months of misdirected development. Without analytics, founders build what they think users want instead of what data shows users actually do. That’s typically 2-4 months and $20,000-$60,000 spent on misguided features.

What to do instead

At minimum, instrument these on launch day:

| What to track | Why it matters | Tool examples |
|---|---|---|
| Sign-up completion rate | Measures initial interest-to-activation | PostHog, Mixpanel |
| Core action completion | Validates your key hypothesis | PostHog, Amplitude |
| Feature usage frequency | Shows what people actually use | PostHog, Heap |
| Drop-off points | Identifies where users give up | PostHog, Hotjar |
| Retention (Day 1, 7, 30) | Proves lasting value | PostHog, Mixpanel |

This takes 2-3 days of dev time to set up properly. I’d budget for it - it’s not optional.
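To show what “activation” and “drop-off” mean in practice, here is a tool-agnostic sketch that works on a plain list of `(user_id, event_name)` pairs, the kind of export most analytics tools can give you. The event names and sample data are hypothetical, and this is not any vendor's API.

```python
from collections import defaultdict

# Hypothetical event names; use whatever your product actually emits.
FUNNEL = ("signup_started", "signup_completed", "core_action_completed")


def funnel_counts(events, steps=FUNNEL):
    """Count distinct users who reached each step and every step before it."""
    users_by_event = defaultdict(set)
    for user_id, event_name in events:
        users_by_event[event_name].add(user_id)

    counts = []
    reached = None
    for step in steps:
        users = users_by_event[step]
        reached = users if reached is None else reached & users
        counts.append((step, len(reached)))
    return counts


def conversion_rates(counts):
    """Step-to-step conversion; a low rate marks a drop-off point."""
    rates = []
    for (prev_step, prev_n), (step, n) in zip(counts, counts[1:]):
        rate = n / prev_n if prev_n else 0.0
        rates.append((f"{prev_step} -> {step}", rate))
    return rates


events = [
    ("u1", "signup_started"), ("u1", "signup_completed"), ("u1", "core_action_completed"),
    ("u2", "signup_started"), ("u2", "signup_completed"),
    ("u3", "signup_started"),
    ("u4", "signup_started"), ("u4", "signup_completed"), ("u4", "core_action_completed"),
]

counts = funnel_counts(events)
rates = conversion_rates(counts)
for label, rate in rates:
    print(f"{label}: {rate:.0%}")
```

With this sample data, 4 users start sign-up, 3 finish it, and 2 complete the core action, so the second step is where you would look first.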

Key takeaway: Without analytics, you’re flying blind. Budget 2-3 days of development time and set up event tracking before you launch - not after.

Mistake 5: Perfectionism over shipping

“Let’s polish the onboarding flow one more time” is the MVP version of procrastination.

Something I had to learn the hard way: Perfectionism in MVP development is procrastination wearing a lab coat. The product will never feel ready. Ship it when the core workflow works, not when every edge case is handled.

How perfectionism sneaks in

- Pixel-perfect design iterations when users haven’t even tried the core workflow

- Rewriting code for “cleanliness” before anyone uses the product

- Waiting to launch until the product is “ready” (it’s never ready)

- Spending 3 weeks on edge cases that affect 1% of users

What it costs

Every week of polishing is a week of delayed learning. At typical burn rates, that’s $5,000-$15,000 per week - plus the opportunity cost of not having real user data sooner.

The 80/20 rule for MVPs

If the core workflow works, ship it. I can tell you from experience: you’ll learn more from 10 real users in one week than from 10 more weeks of internal polish.

Things that can be imperfect in an MVP:

- Visual design (functional > beautiful)

- Edge case handling (cover the happy path, log the errors)

- Onboarding (a Loom video is fine for now; you can design a full SaaS onboarding flow later)

- Admin tools (you can use a database GUI)

- Settings and customization (pick sensible defaults)

Things that must work well:

- The core workflow (the one thing the product does)

- Data integrity (don’t lose or corrupt user data)

- Security basics (auth, encryption, input validation)

Mistake 6: Ignoring user feedback

Some founders build the MVP, ship it, then go back to building what they planned instead of what users asked for.

Signs you’re ignoring feedback

- You have a roadmap that hasn’t changed since before launch

- Users ask for something and you say “that’s planned for V3”

- You interpret “I don’t use feature X” as “they don’t understand feature X”

- Your post-launch development looks exactly like your pre-launch plan

What to do instead

After launch, your roadmap should change every two weeks based on what you learn. Create a simple feedback loop:

- Collect: Talk to 5 users per week (calls, not surveys).

- Categorize: What do they love, tolerate, and complain about?

- Prioritize: What change would improve retention for the most users?

- Ship: Build that one thing. Repeat.
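One way to keep the loop honest is to tally call notes instead of trusting memory. The sketch below ranks complaint themes by how many distinct users raised them. That count is only a rough proxy for retention impact, and the note format and sample themes are assumptions for illustration.

```python
def rank_complaints(notes):
    """Rank complaint themes by the number of distinct users who raised them.

    notes: iterable of (user_id, theme, reaction), where reaction is
    'love', 'tolerate', or 'complain'.
    """
    users_per_theme = {}
    for user_id, theme, reaction in notes:
        if reaction == "complain":
            users_per_theme.setdefault(theme, set()).add(user_id)
    # Most users first; ties broken alphabetically so the order is stable.
    return sorted(
        ((theme, len(users)) for theme, users in users_per_theme.items()),
        key=lambda item: (-item[1], item[0]),
    )


# Hypothetical notes from one week of user calls.
notes = [
    ("u1", "csv export", "complain"),
    ("u2", "csv export", "complain"),
    ("u3", "slow search", "complain"),
    ("u1", "dashboard", "love"),
    ("u3", "csv export", "complain"),
    ("u2", "slow search", "tolerate"),
]

ranked = rank_complaints(notes)
print(ranked)  # [('csv export', 3), ('slow search', 1)]
```

The top entry is a candidate for the “one thing” to ship next, not an automatic decision.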

Key takeaway: Your post-launch roadmap should look nothing like your pre-launch plan. If it does, you’re building for yourself, not your users.

Mistake 7: Underestimating costs

The initial development quote is only part of the picture.

Where cost underestimates come from

| What founders budget | What actually costs money |
|---|---|
| Development hours | Development hours + project management + QA + deployment |
| Feature build cost | Build cost + third-party service fees + infrastructure |
| Launch budget | Launch + 3 months of post-launch iteration |
| One-time expense | Ongoing hosting, monitoring, maintenance |

The real math

If your development quote is $50,000, your realistic total budget for year one should be:

- Development: $50,000

- Post-launch iteration (3 months): $15,000-$25,000

- Infrastructure and third-party services: $3,000-$8,000/year

- Bug fixes and maintenance: $5,000-$10,000

- Realistic total: $73,000-$93,000

That’s 46-86% more than the initial quote.
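Here is the same math as a small sketch you can rerun with your own quote. The default ranges are the ones from the list above, which were sized for a $50,000 quote; they are fixed amounts rather than percentages, so adjust them if your quote is very different. The function names are mine.

```python
def year_one_budget(quote,
                    iteration=(15_000, 25_000),
                    infrastructure=(3_000, 8_000),
                    maintenance=(5_000, 10_000)):
    """Return the (low, high) realistic first-year total for a development quote."""
    low = quote + iteration[0] + infrastructure[0] + maintenance[0]
    high = quote + iteration[1] + infrastructure[1] + maintenance[1]
    return low, high


def overrun_percent(quote, total):
    """How far the total exceeds the quote, as a whole-number percentage."""
    return round((total - quote) / quote * 100)


quote = 50_000
low, high = year_one_budget(quote)
print(f"Realistic total: ${low:,} to ${high:,}")  # Realistic total: $73,000 to $93,000
print(f"Over quote: {overrun_percent(quote, low)}% to {overrun_percent(quote, high)}%")  # Over quote: 46% to 86%
```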

For detailed cost breakdowns, see MVP Development Cost in 2026: Founder Pricing Guide.

Key takeaway: Budget 50-80% more than your development quote for the full first-year cost. The build is only about 55-70% of total spend.

Mistake 8: No go-to-market plan

“If we build it, they will come” almost never works for MVPs.

What a minimum viable go-to-market looks like

You don’t need a marketing team. You need answers to three questions before launch:

- Where are your target users already gathering? (Communities, forums, social platforms, events)

- How will you get 50 people to try it in the first two weeks? (Personal outreach, community posts, beta invites)

- What’s your feedback collection mechanism? (Calls, in-app surveys, Slack channel)

Common GTM mistakes for MVPs

- Relying entirely on Product Hunt, Hacker News, or a single launch event

- Building a landing page with a waitlist and assuming the waitlist = demand

- Spending money on paid ads before the product converts organically

- Waiting until the product is “finished” to think about distribution

What to do instead

Start outreach to potential users 4-6 weeks before your MVP launches. Build a small community of 20-50 interested people who’ve agreed to try it when it’s ready. Have 10 demo calls scheduled for launch week.

Mistake 9: Wrong development partner

The cheapest quote is almost never the cheapest outcome. I’ve seen this pattern enough to be blunt about it.

Red flags in MVP agencies/freelancers

| Red flag | What it usually means |
|---|---|
| Quote is 50%+ below other quotes | They’ll make it up in change orders or cut corners |
| No questions about your business goals | They build features, not products |
| “Yes” to everything on your feature list | They’re not thinking about what you actually need |
| No dedicated project manager | You’ll spend 10+ hours/week managing them |
| No post-launch support plan | You’ll need another agency for bugs and iterations |
| Portfolio shows only design, not shipped products | They build prototypes, not MVPs |

What a good partnership looks like

- They push back on your feature list (and explain why)

- They ask about your success metrics and validation status

- They propose phased delivery with checkpoints

- They include analytics setup in their standard process

- They have a clear post-launch support model

- They’ve built products in your general domain before

For a detailed evaluation framework, see How to Hire an MVP Development Agency.

Key takeaway: The cheapest development quote almost always becomes the most expensive outcome. Evaluate partners on their process, questions, and post-launch support - not just their price.

The cost of getting it right vs. getting it wrong

Here’s the real math, using a standard B2B SaaS MVP as an example:

| Factor | Getting it wrong (multiple mistakes) | Getting it right |
|---|---|---|
| Features built | 25-35 | 7-10 |
| Development cost | $80,000-$150,000 | $35,000-$65,000 |
| Time to launch | 5-8 months | 8-14 weeks |
| Post-launch rebuild needed? | Usually yes ($20K-$50K) | Minor iterations ($10K-$20K) |
| Time to first user insight | 6-9 months | 10-16 weeks |
| Total year-one spend | $120,000-$220,000 | $55,000-$95,000 |

The difference isn’t marginal - it’s 2-3x in cost and twice the time. And the “getting it right” path produces better user insights because you launch earlier with a testable hypothesis.

A pre-launch checklist to avoid all nine mistakes

Before you approve your MVP development plan, confirm:

- Feature list has fewer than 12 items, and each one connects to your core hypothesis

- You’ve validated the problem with at least 10 potential users

- At least 2-3 people have pre-committed (paid, signed LOI, or equivalent)

- Tech stack uses mature, widely-adopted tools

- Analytics is in the development scope (not “we’ll add it later”)

- Design scope is “functional and clear,” not “pixel-perfect and polished”

- Budget includes 30-50% reserve for post-launch iteration

- You have a plan to get 50 users in the first two weeks

- Your development partner has asked more questions than you have

If you can check all nine boxes, you’ve avoided the most expensive mistakes before writing a single line of code.

FAQ

What’s the most expensive MVP mistake?

Building too much - by far. Excess features can add $40,000-$100,000 to a project that should have cost $35,000-$60,000. They also delay your launch by months, which means months of delayed learning and wasted burn.

How do I know if I’m building too much for an MVP?

If your feature list is longer than 10-12 items, or your timeline is longer than 12-16 weeks, you’re almost certainly building too much. Ask for each feature: “Can a user complete the core workflow without this?” If yes, cut it.

Is it okay to use no-code tools for an MVP?

Yes - if your core workflow fits within the platform’s capabilities and you understand the limitations. No-code works well for simple CRUD apps, landing pages, and internal tools. It struggles with complex logic, custom integrations, and anything requiring real-time features. I always ask: “What happens when we outgrow this platform?”

How much should I budget beyond the initial development quote?

Plan for 50-80% beyond the initial quote for year one. This covers post-launch iterations, bug fixes, infrastructure, third-party services, and maintenance. If your quote is $50,000, budget $75,000-$90,000 total.

When should I pivot vs. iterate on my MVP?

Iterate when users engage with your core workflow but want it improved. Pivot when users don’t engage with the core workflow at all - or when the problem you’re solving isn’t painful enough to change behavior. Give yourself 6-8 weeks of post-launch data before making the pivot decision.

How do I avoid scope creep during development?

Define your MVP scope in writing before development starts. Create a “Version 2 Board” for all new ideas. Require any scope changes to go through a formal review with documented cost and timeline impact. If your agency says “no problem, we’ll just add it,” that’s a red flag - they should be quantifying the impact.

Related reading

- How to Build an MVP: Complete Guide for Founders and SMB Owners

- MVP Development Cost in 2026: Founder Pricing Guide

- How to Hire an MVP Development Agency

The nine mistakes on this list account for the majority of MVP budget waste we see. Fix them before you start building, and you’ll spend less, launch faster, and learn what actually matters.

One action this week. Open your feature list. For each item, ask: “Can a user complete the core workflow without this?” If yes, move it to v2.