SLO

An infrastructure monitoring platform was trapped by its own success: 8 years of growth built on code nobody dared touch. A phased modernization approach helped them ship faster without breaking the 99.95% uptime promise their enterprise customers depended on.

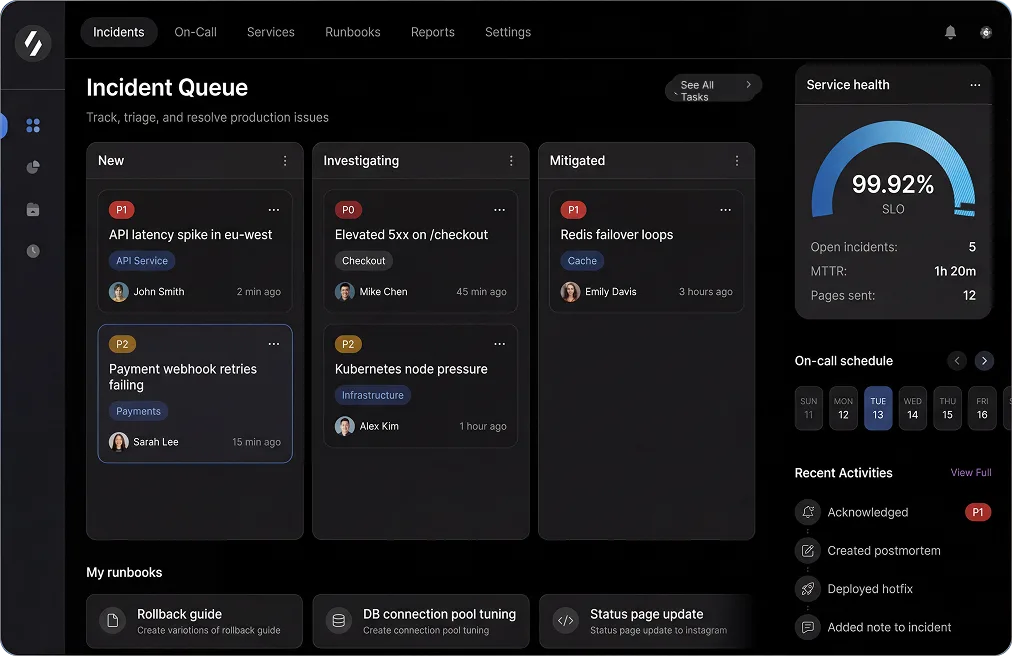

The featured visual reflects the experience designed and built for this project.

Staff

Codivox Editorial Team

The codebase everyone was afraid to change

Marcus Chen, SLO's Engineering Lead, showed us a Slack thread that had become infamous inside the company. Subject: 'Can we upgrade Node.js?' The thread had 47 replies spanning 6 months, and the answer was still 'maybe.' The hesitation had nothing to do with competence; they were excellent engineers. It came from the core monitoring service, a 200K-line monolith written by 15 different developers over 8 years, with test coverage hovering around 40% and a reputation for mysterious side effects. "Every time we touch it," Marcus told us, "something breaks in production. So we just... stopped touching it."

Scenario

SLO provided real-time infrastructure monitoring for 300+ enterprise customers, many with contractual 99.95% uptime SLAs. The platform worked - mostly. But underneath, the codebase was a minefield. Critical services mixed three different coding patterns, tests were sparse and brittle, and nobody could confidently answer 'what will break if we change this?' The result: dependency upgrades were postponed indefinitely, security patches took weeks to deploy, and new features required heroic workarounds to avoid touching legacy code. Meanwhile, competitors with modern stacks were shipping faster.

The moment of truth: in January 2025, their security team flagged a critical vulnerability in an outdated dependency. The fix was simple, but it required touching the auth module. Six weeks later, the patch still wasn't deployed because nobody was confident it wouldn't break authentication for 300 enterprise customers. Marcus knew they couldn't keep operating this way.

Challenge

- Critical modules had 40% test coverage with tests that passed even when the code was broken - engineers had zero confidence in the test suite.

- A routine dependency upgrade in Q3 2024 caused a 4-hour outage affecting 80 customers because of an undocumented side effect in the alerting pipeline.

- Incident response consumed 35% of engineering capacity - the team spent more time firefighting than building.

- The company had postponed 14 security patches because 'we're not sure what will break' - a growing compliance risk.

What was designed and built

- Started with a 'risk map' of the entire codebase using git history, production incident logs, and engineer interviews - we identified the 12 modules that caused 80% of incidents and focused there first.

- Backfilled tests using a 'characterization testing' approach: instead of testing what the code should do, we tested what it actually does, then refactored with confidence that behavior wouldn't change.

- Split large refactors into 'guarded slices' with feature flags and rollback checkpoints - every change could be reverted in under 60 seconds if metrics degraded.

- Defined incident ownership with explicit SLAs: every incident got an owner within 15 minutes, a postmortem within 48 hours, and action items linked to sprint planning with due dates.
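The 'risk map' step above reduces, at its core, to a Pareto cut: rank modules by how many incidents they caused and keep the smallest set that covers 80% of them. This is a minimal sketch, not the team's actual tooling. It assumes incidents have already been attributed to modules, for example from tagged postmortems. The function name `pareto_modules` and the module names and counts are hypothetical. The source does not say how git history and interviews were weighted.

```python
def pareto_modules(incidents_by_module: dict[str, int], target: float = 0.8) -> list[str]:
    """Return the smallest set of modules, riskiest first, whose combined
    incident count reaches `target` (e.g. 0.8 = 80%) of all incidents."""
    total = sum(incidents_by_module.values())
    if total == 0:
        return []
    selected: list[str] = []
    covered = 0
    # Walk modules from most to fewest incidents, stopping once the target is covered.
    for module, count in sorted(incidents_by_module.items(), key=lambda kv: kv[1], reverse=True):
        selected.append(module)
        covered += count
        if covered / total >= target:
            break
    return selected


# Hypothetical incident counts, e.g. from postmortems tagged with the module at fault.
counts = {"auth": 40, "alerting": 25, "billing": 15, "ui": 10, "reports": 6, "export": 4}
print(pareto_modules(counts))  # ['auth', 'alerting', 'billing']
```

In practice the counts could also be weighted by incident severity or by churn from `git log`. The selection logic stays the same.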
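Characterization testing, as described above, records what the code actually returns today and fails when a refactor changes any of it, even if the old behavior looks wrong. This is a minimal golden-master sketch. `legacy_severity`, `refactored_severity`, `record_snapshot`, `drifted_inputs` and the boundary inputs are all hypothetical stand-ins, not code from SLO's monolith.

```python
import json


def legacy_severity(latency_ms: float, error_rate: float) -> str:
    """Hypothetical legacy function. Quirk: an error_rate of exactly 0.05 is
    NOT 'critical'. Whether that is intended is unknown, so we preserve it."""
    if error_rate > 0.05:
        return "critical"
    if latency_ms >= 500:
        return "warning"
    return "ok"


# Inputs chosen to probe the boundaries, including the suspicious ones.
SAMPLE_INPUTS = [(100, 0.0), (499.9, 0.05), (500, 0.05), (500, 0.051), (2000, 0.0)]


def record_snapshot(fn, inputs) -> dict[str, str]:
    """Capture what the code *actually* returns today, right or wrong."""
    return {json.dumps(args): fn(*args) for args in inputs}


def drifted_inputs(fn, inputs, snapshot: dict[str, str]) -> list[tuple]:
    """Return the inputs whose output no longer matches the snapshot."""
    return [args for args in inputs if fn(*args) != snapshot[json.dumps(args)]]


def refactored_severity(latency_ms: float, error_rate: float) -> str:
    # A tidy-looking "cleanup" that silently changes > to >=.
    if error_rate >= 0.05:
        return "critical"
    return "warning" if latency_ms >= 500 else "ok"


snapshot = record_snapshot(legacy_severity, SAMPLE_INPUTS)  # in practice, committed to the repo
print(drifted_inputs(legacy_severity, SAMPLE_INPUTS, snapshot))      # []
print(drifted_inputs(refactored_severity, SAMPLE_INPUTS, snapshot))  # [(499.9, 0.05), (500, 0.05)]
```

The snapshot deliberately encodes the quirk. Changing it then becomes an explicit, reviewed decision rather than a side effect of a refactor.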
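One way a 'guarded slice' could be wired is sketched here, under stated assumptions. A flag routes calls to the new code path. Failures fall back to the legacy path. If the new path's error rate over a recent window crosses a threshold, the flag flips off, which is a rollback that needs no redeploy. The `GuardedSlice` class, its parameters and the parse functions are hypothetical. A production setup would more likely read metrics from the monitoring system and use a feature-flag service rather than in-process counters.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class GuardedSlice:
    """Hypothetical guard around one refactored slice. While `enabled`, calls go
    to the new code path; if its error rate over the last `window` calls exceeds
    `max_error_rate`, the flag flips off and every later call uses the legacy path."""
    name: str
    max_error_rate: float = 0.02
    window: int = 100
    enabled: bool = True
    _results: deque = field(init=False, repr=False)

    def __post_init__(self) -> None:
        # Bounded history: old results fall off as new ones arrive.
        self._results = deque(maxlen=self.window)

    def call(self, new_impl, legacy_impl, *args):
        if not self.enabled:
            return legacy_impl(*args)
        try:
            result = new_impl(*args)
        except Exception:
            self._record(ok=False)
            # Serve this request from the legacy path so the caller is unaffected.
            return legacy_impl(*args)
        self._record(ok=True)
        return result

    def _record(self, ok: bool) -> None:
        self._results.append(ok)
        failures = self._results.count(False)
        # Only judge once the window is full, so one early error can't trip it.
        if len(self._results) == self.window and failures / self.window > self.max_error_rate:
            self.enabled = False  # the "rollback": no redeploy needed


def legacy_parse(s: str) -> int:
    return int(s)


def new_parse(s: str) -> int:
    # Hypothetical regression introduced by the refactor: rejects negatives.
    if s.startswith("-"):
        raise ValueError("negative numbers not supported")
    return int(s)


guard = GuardedSlice("parse-v2", max_error_rate=0.1, window=10)
inputs = ["1", "-2", "3", "-4", "5", "6", "7", "8", "9", "10"]
results = [guard.call(new_parse, legacy_parse, s) for s in inputs]
print(results)        # [1, -2, 3, -4, 5, 6, 7, 8, 9, 10]
print(guard.enabled)  # False
```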
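The ownership targets above (an owner within 15 minutes, a postmortem within 48 hours) are easy to check mechanically. This is a minimal sketch with a hypothetical `Incident` record and `sla_breaches` helper. It assumes both clocks start when the incident is opened, which the source does not specify. The postmortem clock, for instance, might instead start at resolution.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Targets from the process above; measuring both from when the incident opened is an assumption.
OWNER_SLA = timedelta(minutes=15)
POSTMORTEM_SLA = timedelta(hours=48)


@dataclass
class Incident:
    incident_id: str
    opened_at: datetime
    owner_assigned_at: Optional[datetime] = None
    postmortem_at: Optional[datetime] = None


def sla_breaches(incident: Incident, now: datetime) -> list[str]:
    """Return which ownership SLAs the incident has missed as of `now`.
    A step that hasn't happened yet is treated as happening 'now', so it
    only counts as a breach once its deadline has actually passed."""
    breaches = []
    if (incident.owner_assigned_at or now) > incident.opened_at + OWNER_SLA:
        breaches.append("owner")
    if (incident.postmortem_at or now) > incident.opened_at + POSTMORTEM_SLA:
        breaches.append("postmortem")
    return breaches


opened = datetime(2025, 3, 4, 9, 0)
incident = Incident("INC-101", opened, owner_assigned_at=opened + timedelta(minutes=20))
print(sla_breaches(incident, now=opened + timedelta(hours=1)))   # ['owner']
print(sla_breaches(incident, now=opened + timedelta(hours=50)))  # ['owner', 'postmortem']
```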

The alert that cried wolf (and how we fixed it)

At 2:47am on a Tuesday, the on-call engineer got paged: 'High API latency detected.' She opened the dashboard, saw a spike, then spent 20 minutes digging through logs before realizing it was a false alarm - a single slow customer query, not a systemic issue. By 3:15am she was back in bed, but too frustrated to sleep. "This happens 3-4 times per week," she told us the next day. "Half our alerts are noise. The other half don't tell us what's actually broken." The team could detect incidents, but they couldn't diagnose them - and that was costing them hours per incident.

Scenario

SLO's monitoring platform was built to monitor infrastructure, but ironically, their own observability was a mess. They had alerts - lots of them. But alert volume had grown organically over 8 years without a coherent strategy. Some alerts fired on symptoms (high latency), others on causes (database connection pool exhausted), and many fired on conditions that weren't actually user-impacting. Meanwhile, distributed traces were incomplete, so when something did break, engineers had to manually correlate logs across 12 services to find the root cause. Median time to triage an incident: 79 minutes. For a company selling uptime, that was embarrassing.

Challenge

- Alert noise: 60% of pages were false positives or low-severity issues that didn't require immediate action - on-call engineers were burning out.

- Incomplete traces: only 40% of critical request paths had end-to-end tracing, so root cause analysis was manual detective work.

- Inconsistent postmortems: some teams wrote detailed postmortems with action items, others wrote 'fixed it' and moved on - no organizational learning.

- No visibility into change risk: engineers deployed code with fingers crossed, hoping metrics wouldn't degrade.

What was designed and built

- Rebuilt the alerting strategy around user impact: alerts only fired when customers were affected, not when internal metrics looked weird - reduced alert volume by 60% while improving incident detection.

- Implemented service-level dashboards aligned to service-level objective (SLO) error budgets - every service had a clear 'health score' based on customer-facing metrics, not internal implementation details.

- Added end-to-end distributed tracing for the top 20 transaction paths (covering 95% of customer traffic) - root cause analysis went from 'grep through logs' to 'click on the slow span.'

- Linked postmortem action items directly to sprint planning with explicit owners and due dates - no more 'we should fix this someday' without accountability.
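To give a sense of scale for an error budget, assume a 30-day window (the source does not state the window) and the 99.95% target from the customer SLAs. The budget is then only about 21.6 minutes of customer-facing failure per window:

```latex
\text{error budget} = (1 - 0.9995) \times 30\,\text{d} \times 24\,\tfrac{\text{h}}{\text{d}} \times 60\,\tfrac{\text{min}}{\text{h}} = 0.0005 \times 43{,}200\,\text{min} = 21.6\,\text{min}
```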
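One widely used way to make alerts fire only on user impact is multiwindow burn-rate alerting, as described in Google's SRE Workbook. The source does not say SLO used exactly this scheme. The sketch assumes a request-based measure (failed customer requests over total requests). It borrows the workbook's example threshold of 14.4 for a long window paired with a short one. The function names and the request counts in the usage lines are hypothetical.

```python
def burn_rate(bad: int, total: int, slo_target: float) -> float:
    """How fast the error budget is being spent: 1.0 means exactly on budget,
    14.4 means a 30-day budget would be gone in about 50 hours."""
    if total == 0:
        return 0.0
    return (bad / total) / (1.0 - slo_target)


def should_page(long_window: tuple[int, int], short_window: tuple[int, int],
                slo_target: float = 0.9995, threshold: float = 14.4) -> bool:
    """Page only if customer-facing failures burn budget fast in BOTH windows:
    the long window shows the impact is sustained, the short one that it is
    still happening (so the page clears quickly once the problem is fixed)."""
    return (burn_rate(*long_window, slo_target) >= threshold
            and burn_rate(*short_window, slo_target) >= threshold)


# (bad_requests, total_requests) per window; numbers are hypothetical.
print(should_page((120, 100_000), (15, 8_000)))     # False: 0.12% errors, burn rate ~2.4
print(should_page((1_000, 100_000), (100, 8_000)))  # True: burn rates ~20 and ~25
```

A single slow query barely moves either ratio, so it never pages. That addresses the 2:47am false alarm described earlier.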
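'Click on the slow span' works because a trace records each hop as a span with a parent and a duration. The sketch below finds the span with the largest self time. It uses a hypothetical `Span` type and made-up timings rather than any real tracing SDK's API. It also assumes a span's children run one after another; parallel child calls would need their overlapping intervals merged first.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    span_id: str
    parent_id: Optional[str]  # None for the root span
    service: str
    duration_ms: float


def slowest_span(spans: list[Span]) -> Span:
    """Return the span with the largest self time: its duration minus the
    time spent in its direct children, i.e. where the request actually waited."""
    child_time: dict[str, float] = {}
    for s in spans:
        if s.parent_id is not None:
            child_time[s.parent_id] = child_time.get(s.parent_id, 0.0) + s.duration_ms
    return max(spans, key=lambda s: s.duration_ms - child_time.get(s.span_id, 0.0))


trace = [
    Span("a", None, "gateway", 300),
    Span("b", "a", "api", 280),
    Span("c", "b", "postgres", 240),
    Span("d", "b", "cache", 20),
]
print(slowest_span(trace).service)  # postgres
```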

Key Wins

- "I used to dread on-call. Now I actually sleep through most nights because the alerts that wake me up are real problems I can fix." - Senior SRE

- Median time to triage dropped from 79 minutes to 49 minutes - engineers spent less time investigating and more time fixing.

- Postmortem action item completion rate went from 40% to 85% - the team stopped repeating the same incidents.

- "For the first time, we can deploy on Friday without anxiety. We see exactly what changed and what impact it had." - Engineering Manager

- The skeptic's transformation: A senior engineer who'd said 'We've tried to improve test coverage before and failed' became the team's testing advocate after seeing characterization tests catch 3 critical bugs before they hit production. His quote: 'I didn't believe tests could work for legacy code. I was wrong.'

What 6 months of careful modernization delivered

The transformation wasn't dramatic - it was deliberate. No big-bang rewrites, no risky migrations, just steady progress on the things that mattered: test coverage, observability, and team confidence. The result? SLO didn't just modernize their codebase - they modernized how they work.

| Metric | Before | After | Impact |
|---|---|---|---|
| Median incident resolution time | 79 min | 49 min | 38% faster resolution - customers experience shorter outages |
| p95 API latency | 610 ms | 445 ms | 27% faster response - better experience for enterprise customers |
| Release regressions | 20 per quarter | 11 per quarter | 45% reduction - deployments are safer and more predictable |
| Test coverage (critical paths) | 40% | 78% | Engineers can refactor with confidence instead of fear |

"We're not done modernizing - but we're no longer afraid to start. That mindset shift is worth more than any single technical improvement." - Marcus Chen

The company now ships features at competitive speed, maintains their 99.95% uptime promise, and engineers no longer treat the codebase like a haunted house. The ripple effect: improved confidence in the codebase enabled SLO to finally tackle the features they'd been postponing for 2 years, including a major API redesign that became their biggest competitive differentiator in enterprise deals.
