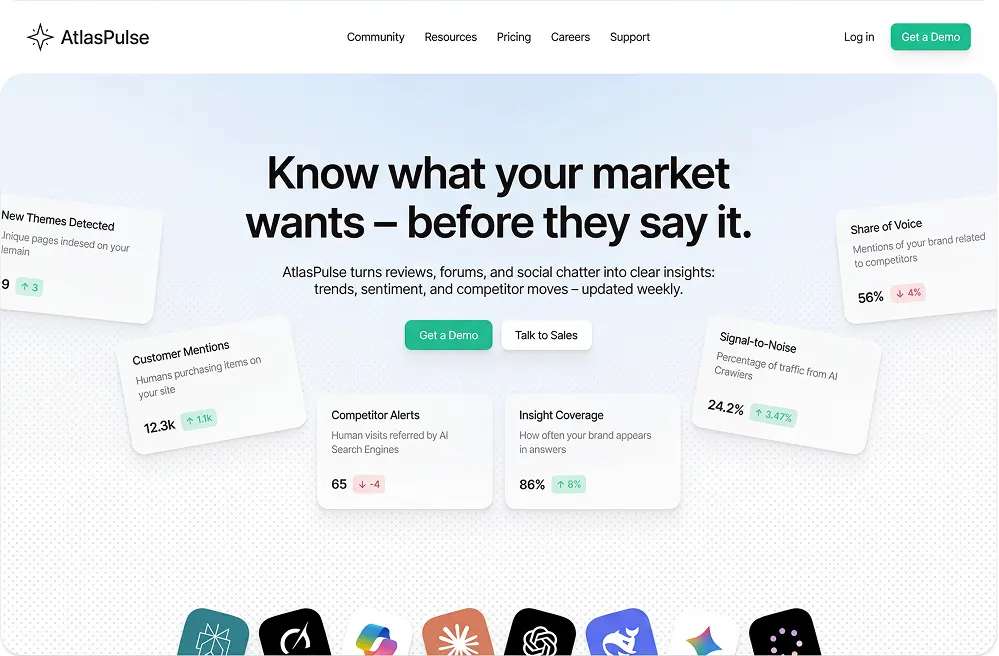

AtlasPulse

How a market intelligence platform processing 2M+ reviews per day cut release time by 44% and nearly eliminated weekend firefighting by fixing what most teams ignore: the invisible handoffs between squads.

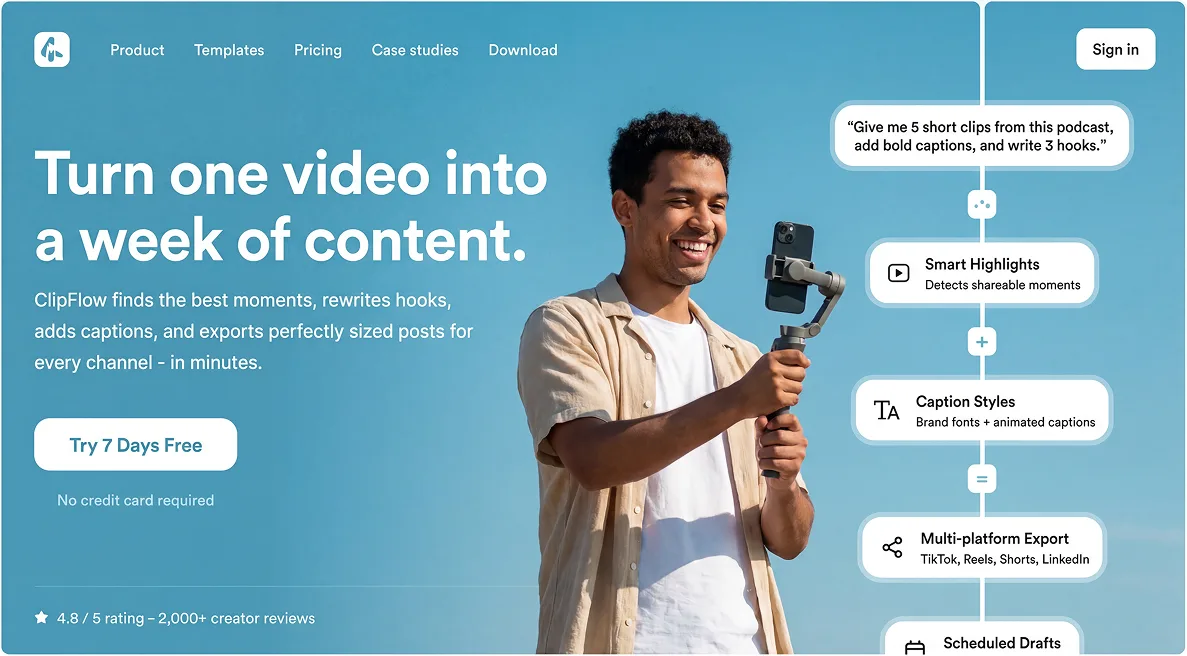

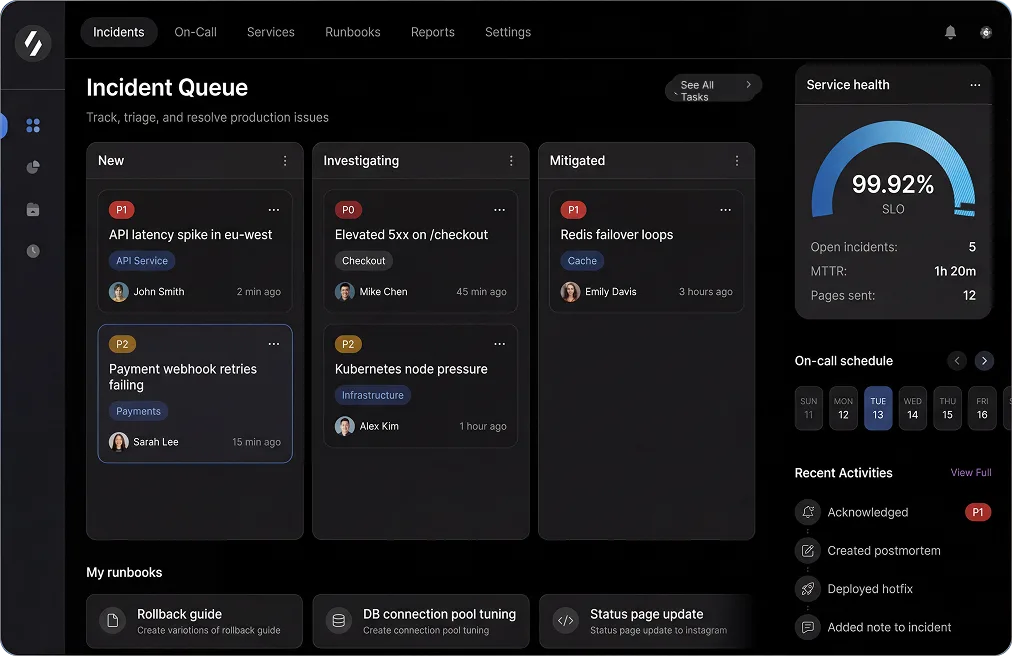

The featured visual reflects the experience designed and built for this project.

Staff

Codivox Editorial Team

The Friday night pattern nobody wanted to admit

Talia Nguyen, AtlasPulse's Head of Product, noticed something troubling: every Friday at 4pm, the same three engineers would quietly cancel their evening plans. Not because of a major incident, but because nobody knew who owned the release going out that night. "We had four talented squads shipping to one platform," she told us, "but we were operating like four separate companies. The gaps between teams were killing us."

Scenario

AtlasPulse turns reviews, forums, and social chatter into competitive intelligence for product teams, processing over 2 million data points daily to surface trends, sentiment, and competitor moves before they become obvious. The platform was growing fast, serving 200+ enterprise customers who depended on real-time insights to make product decisions. But each of its four product squads had evolved different deployment rituals, different definitions of "ready to ship," and different ideas about who should stay online after a Friday release. The result? Releases that should have taken hours were taking 9+ days, and every deployment felt like a gamble.

The breaking point came in November 2024: a botched Friday release caused a 6-hour data processing delay, and customers missed critical competitor alerts during a major industry event. A Fortune 500 customer's product VP called Talia directly: "We're evaluating alternatives. We can't have blind spots when the market is moving." That call changed everything.

Challenge

- PR review queues regularly blocked releases for 2-3 days because no squad had visibility into others' priorities: data pipeline improvements sat waiting while UI tweaks got merged first.

- No common release readiness checklist: one squad checked data processing impact, another didn't, leading to 3 rollbacks in one month that caused customer alert delays.

- Hotfix ownership was a game of Slack tag after Friday deployments, with critical sentiment analysis dashboards showing stale data for hours while teams figured out who should respond.

- The team shipped 13 emergency hotfixes per month, each one eroding trust with enterprise customers who needed reliable, real-time market intelligence.

What was designed and built

- Introduced a shared release train cadence with an 'owner-of-the-week' rotation—one engineer from any squad became the release captain, with authority to block or greenlight any change based on data processing impact.

- Created a mandatory 12-point readiness checklist covering QA sign-off, data pipeline validation, rollback procedures, monitoring alerts, and on-call handoff—no exceptions, even for 'small' fixes.

- Built a pre-release risk scoring system using git history and service dependency graphs, so high-risk changes to data ingestion or sentiment analysis automatically triggered earlier architecture review and pair programming sessions.

- Implemented 'release rehearsals' every Thursday where squads walked through their changes together, surfacing conflicts before they hit production—especially critical for changes affecting real-time data processing.
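The pre-release risk scoring idea can be illustrated with a minimal sketch. This is not AtlasPulse's actual system: the service names, the `DOWNSTREAM` graph, the weights, and `REVIEW_THRESHOLD` are all assumptions chosen for illustration. It only shows how a dependency graph (blast radius) and git history (recent reverts, diff size) might be combined into one score.

```python
from dataclasses import dataclass

# Hypothetical dependency graph: service -> services that consume its output.
DOWNSTREAM = {
    "ingestion": ["processing"],
    "processing": ["sentiment", "alerts"],
    "sentiment": ["dashboards"],
    "alerts": [],
    "dashboards": [],
    "ui": [],
}

REVIEW_THRESHOLD = 10  # assumed cutoff for triggering early architecture review


@dataclass
class Change:
    service: str
    files_changed: int   # size of the diff, e.g. taken from git
    recent_reverts: int  # reverts touching this service in recent git history


def downstream_count(service, graph):
    """Count every service reachable from `service` in the dependency graph."""
    seen = set()
    stack = list(graph.get(service, []))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(graph.get(node, []))
    return len(seen)


def risk_score(change, graph=DOWNSTREAM):
    """Combine blast radius, revert history, and change size (weights are illustrative)."""
    blast = downstream_count(change.service, graph)
    size = min(change.files_changed, 20) / 20  # cap so huge diffs don't dominate
    return 3 * blast + 2 * change.recent_reverts + 5 * size


def needs_early_review(change):
    """True when the score reaches the assumed review threshold."""
    return risk_score(change) >= REVIEW_THRESHOLD


if __name__ == "__main__":
    print(needs_early_review(Change("ingestion", files_changed=10, recent_reverts=1)))
    print(needs_early_review(Change("ui", files_changed=4, recent_reverts=0)))
```

Under these assumed weights, a mid-sized change to ingestion is flagged because four services sit downstream of it, while a small UI change is not.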

The planning ritual that changed everything

Slow releases were just the symptom. The real problem was upstream: product and engineering were planning in parallel universes. "We'd start a sprint with confidence," one tech lead explained, "then by Wednesday we'd discover that the new competitor tracking feature needed changes to our data ingestion pipeline, which was already being refactored for the sentiment analysis update. By Friday we're scrambling." The solution wasn't more meetings. It was better timing and shared language around risk.

Scenario

Product managers would finalize roadmaps in Monday planning sessions, excited about new market intelligence features customers were requesting. Engineering would estimate and commit on Tuesday. By Wednesday, someone would discover that Feature A (new social media source integration) needed pipeline changes that blocked Feature B (enhanced sentiment scoring), which was already in progress. Scope would shift, QA would get squeezed, and critical bugs in data accuracy would surface after code complete when it was too expensive to fix properly. For a platform where data accuracy is the product, this was existential.

Challenge

- Dependency discovery happened mid-sprint, causing an average of 22% story carryover every two weeks. New data sources took twice as long to ship as estimated.

- QA was treated as a post-development gate instead of a planning partner, leading to late-stage data accuracy issues that should have been caught in design. One bug caused competitor alerts to miss 15% of mentions for 3 days.

- Velocity metrics focused on story points completed, not delivery risk or data quality impact. Teams were hitting their numbers while shipping features that occasionally produced inaccurate market insights.

- Engineering leads spent 8+ hours per week in 'emergency' meetings to re-plan work that should have been scoped correctly from the start, time that could have been spent improving data processing performance.

What was designed and built

- Shifted to risk-first sprint planning where data pipeline dependencies were mapped and locked before any story was committed—if a dependency on ingestion, processing, or analysis couldn't be confirmed, the story didn't make the sprint.

- Brought QA into planning as equal partners with explicit entry criteria: no story could start development without defined test scenarios, data accuracy validation criteria, and acceptance criteria reviewed by QA.

- Replaced story-point velocity tracking with a release health dashboard showing delivery risk, dependency status, data quality metrics, and defect trends—leadership finally had signal instead of noise.

- Instituted weekly 30-minute release health reviews with product, QA, and engineering leads using a shared dashboard—no slides, just data and decisions about what ships next.
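The "confirm dependencies before committing" rule from the first item above can be sketched as a simple admission check. The `Story` type, the stage names, and the helper functions are hypothetical and are not taken from AtlasPulse's tooling. The sketch assumes each story lists the pipeline stages it depends on, with a flag showing whether the owning squad has confirmed them.

```python
from dataclasses import dataclass, field

# Pipeline stages named in the text; treated here as the set that must be confirmed.
PIPELINE_STAGES = {"ingestion", "processing", "analysis"}


@dataclass
class Story:
    name: str
    # stage -> True once the owning squad has confirmed the dependency
    dependencies: dict = field(default_factory=dict)


def unconfirmed(story):
    """Return the pipeline stages this story depends on that are not yet confirmed."""
    return sorted(
        stage for stage, ok in story.dependencies.items()
        if stage in PIPELINE_STAGES and not ok
    )


def plan_sprint(candidates):
    """Split candidate stories into committed and deferred lists."""
    committed, deferred = [], []
    for story in candidates:
        (deferred if unconfirmed(story) else committed).append(story.name)
    return committed, deferred


def shared_stages(a, b):
    """Stages both stories touch, i.e. where they could block each other."""
    return sorted(set(a.dependencies) & set(b.dependencies))


if __name__ == "__main__":
    social = Story("social source integration", {"ingestion": True, "processing": False})
    scoring = Story("enhanced sentiment scoring", {"processing": True, "analysis": True})
    print(plan_sprint([social, scoring]))
    print(shared_stages(social, scoring))
```

In this example the social source story is deferred because its processing dependency is unconfirmed, and `shared_stages` surfaces the overlap with sentiment scoring during planning rather than mid-sprint.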

Key Wins

- "For the first time in two years, I can tell our CEO exactly when a new data source integration will ship, and actually hit that date." - Talia Nguyen

- Sprint carryover dropped from 22% to under 10%, and the team stopped treating carryover as normal. New competitive intelligence features shipped on schedule.

- Emergency re-planning meetings disappeared entirely, giving engineering leads 8 hours per week back for actual engineering work, time they used to improve data processing speed by 30%.

- Enterprise customers noticed the difference: "AtlasPulse releases used to make us nervous: would our alerts still work? Now they're boring, in the best possible way." - Product VP, Fortune 500 SaaS Company

- The turning point came when a senior engineer who'd been skeptical of 'process overhead' volunteered to be release captain for the first time, saying: "I finally understand. This isn't bureaucracy, it's clarity. I can see exactly how my data pipeline change affects the sentiment analysis team."

What changed in 10 weeks (and what it meant for the business)

The numbers tell part of the story. The other part is what didn't happen: no more weekend firefighting, no more surprise data delays to enterprise customers, no more engineers quietly burning out from unpredictable on-call chaos. AtlasPulse didn't just get faster—they got sustainable. And for a platform processing 2M+ data points daily, sustainability meant reliability customers could bet their product decisions on.

| Metric | Before | After | Impact |
|---|---|---|---|
| Median time-to-release | 9.1 days | 5.1 days | 44% faster delivery: new data sources and insights reach customers in days, not weeks |
| Post-release hotfixes | 13 per month | 9 per month | 31% reduction: fewer emergency patches, more trust from enterprise customers |
| Sprint carryover | 22% | <10% | Predictable planning: leadership can commit to roadmap dates with confidence |
| Weekend incidents | 2-3 per month | 0-1 per month | Engineers get their weekends back, retention improves, data processing stays reliable |
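The 44% and 31% figures in the Impact column follow directly from the Before and After values. A short check, where `percent_reduction` is a helper name introduced here for illustration:

```python
def percent_reduction(before, after):
    """Relative drop from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


if __name__ == "__main__":
    # Median time-to-release: 9.1 days -> 5.1 days
    print(round(percent_reduction(9.1, 5.1)))  # 44
    # Post-release hotfixes: 13 per month -> 9 per month
    print(round(percent_reduction(13, 9)))     # 31
```

The unrounded values are roughly 43.96% and 30.77%.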

"We didn't just fix our release process. We fixed how our teams work together. That's the difference between shipping faster and shipping sustainably." - Talia Nguyen

The team now ships on a predictable cadence, enterprise customers trust their real-time market intelligence, and engineers no longer dread Friday afternoons. The unexpected ripple effect: AtlasPulse's improved release reliability became a competitive advantage in enterprise sales, with prospects specifically asking about their deployment process and data reliability during demos. One prospect said: "Your competitors can't tell us when new features will ship. You just showed us your release calendar for the next quarter. That's the confidence we need."
